classification—function to perform classification analysis and return results to the user.

Arguments

- data

A data set that includes classification data, for example the Carseats data in the ISLR package.

- colnum

The number of the column that holds the class labels to predict. For example, in the Carseats data this is column 7, ShelveLoc, which has three values: Good, Medium and Bad.

- numresamples

The number of times to resample the data and repeat the analysis.

- do_you_have_new_data

Whether the user has new data to be analyzed with the trained models that were just developed. The examples on this page pass "N".

- how_to_handle_strings

How strings in the data are handled, for example by converting strings to factor levels. The examples on this page pass 0 or 1.

- save_all_trained_models

Whether to save all trained models in the Environment. The examples on this page pass "N".

- use_parallel

"Y" or "N" to turn parallel processing on or off.

- train_amount

The proportion of the data used for training, for example 0.60.

- test_amount

The proportion of the data used for testing, for example 0.20.

- validation_amount

The proportion of the data used for validation, for example 0.20.
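Two of the arguments above lend themselves to a short illustration: how_to_handle_strings (strings converted to factor levels) and the three amount arguments, which in the examples on this page sum to 1. The following base R sketch shows the general idea only. The helper names `strings_to_factors` and `split_three_way` are hypothetical and are not part of the package, and the package's own internal procedure may differ.

```r
# Hypothetical helpers for illustration; not the package's internal code.

# Convert every character column of a data frame to a factor.
strings_to_factors <- function(data) {
  data[] <- lapply(data, function(col) {
    if (is.character(col)) factor(col) else col
  })
  data
}

# Randomly split rows into training, test and validation sets by proportion.
split_three_way <- function(data, train_amount = 0.60, test_amount = 0.20,
                            validation_amount = 0.20) {
  total <- train_amount + test_amount + validation_amount
  stopifnot(isTRUE(all.equal(total, 1)))

  n <- nrow(data)
  shuffled <- sample(n)                            # random row order
  n_train <- round(train_amount * n)
  n_test <- min(round(test_amount * n), n - n_train)
  n_valid <- n - n_train - n_test                  # remainder goes to validation

  list(
    train = data[shuffled[seq_len(n_train)], , drop = FALSE],
    test = data[shuffled[n_train + seq_len(n_test)], , drop = FALSE],
    validation = data[shuffled[n_train + n_test + seq_len(n_valid)], , drop = FALSE]
  )
}

# Small made-up data set with one string column.
toy <- data.frame(
  price = c(10, 12, 9, 15, 11, 8, 14, 13, 7, 16),
  shelf = rep(c("Good", "Bad"), 5),
  stringsAsFactors = FALSE
)
toy <- strings_to_factors(toy)

set.seed(42)
parts <- split_three_way(toy)
sapply(parts, nrow)   # number of rows in each of the three sets
```

Rounding the training and test sizes and giving the remainder to the validation set keeps every row in exactly one set, even when the proportions do not divide the row count evenly.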

Value

a full analysis, including data visualizations, statistical summaries, and a full report on the results of 35 models on the data

Examples

Classification(data = Carseats,
               colnum = 7,
               numresamples = 2,
               do_you_have_new_data = "N",
               how_to_handle_strings = 1,
               save_all_trained_models = "N",
               use_parallel = "N",
               train_amount = 0.60,
               test_amount = 0.20,
               validation_amount = 0.20)

#> [1]

#> [1] "Resampling number 1 of 2,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 2 of 2,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> $Final_results

#>

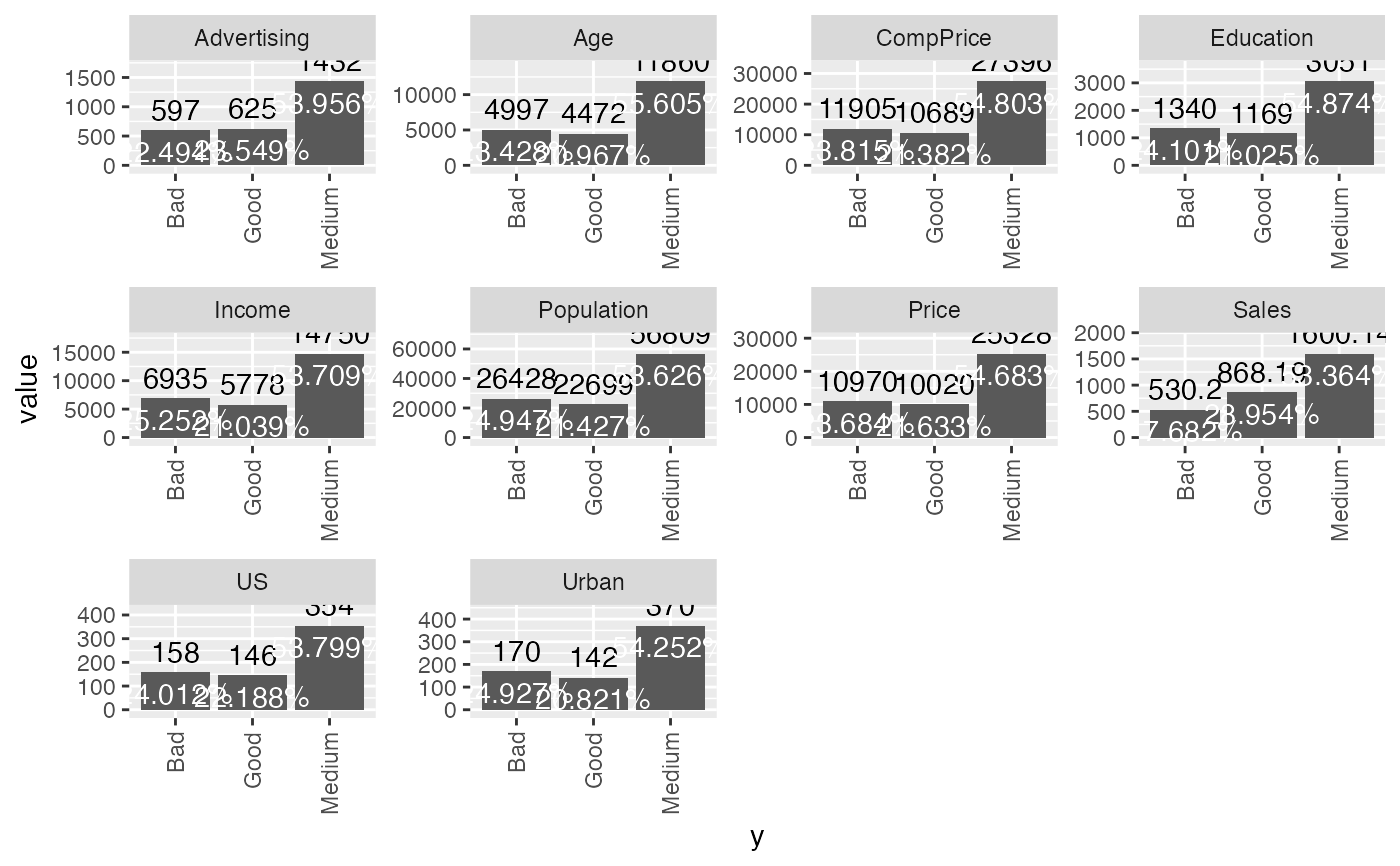

#> $Barchart

#>

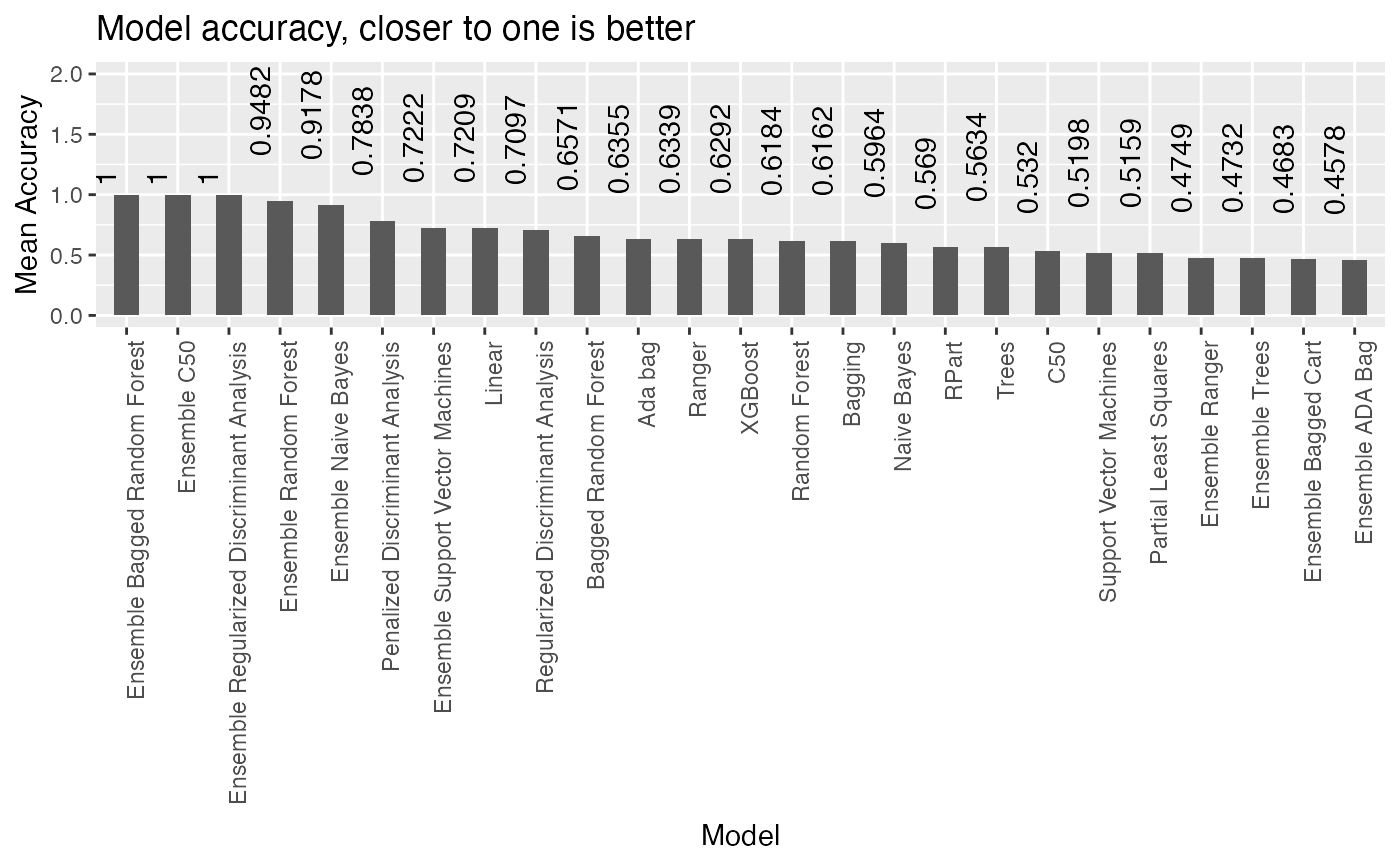

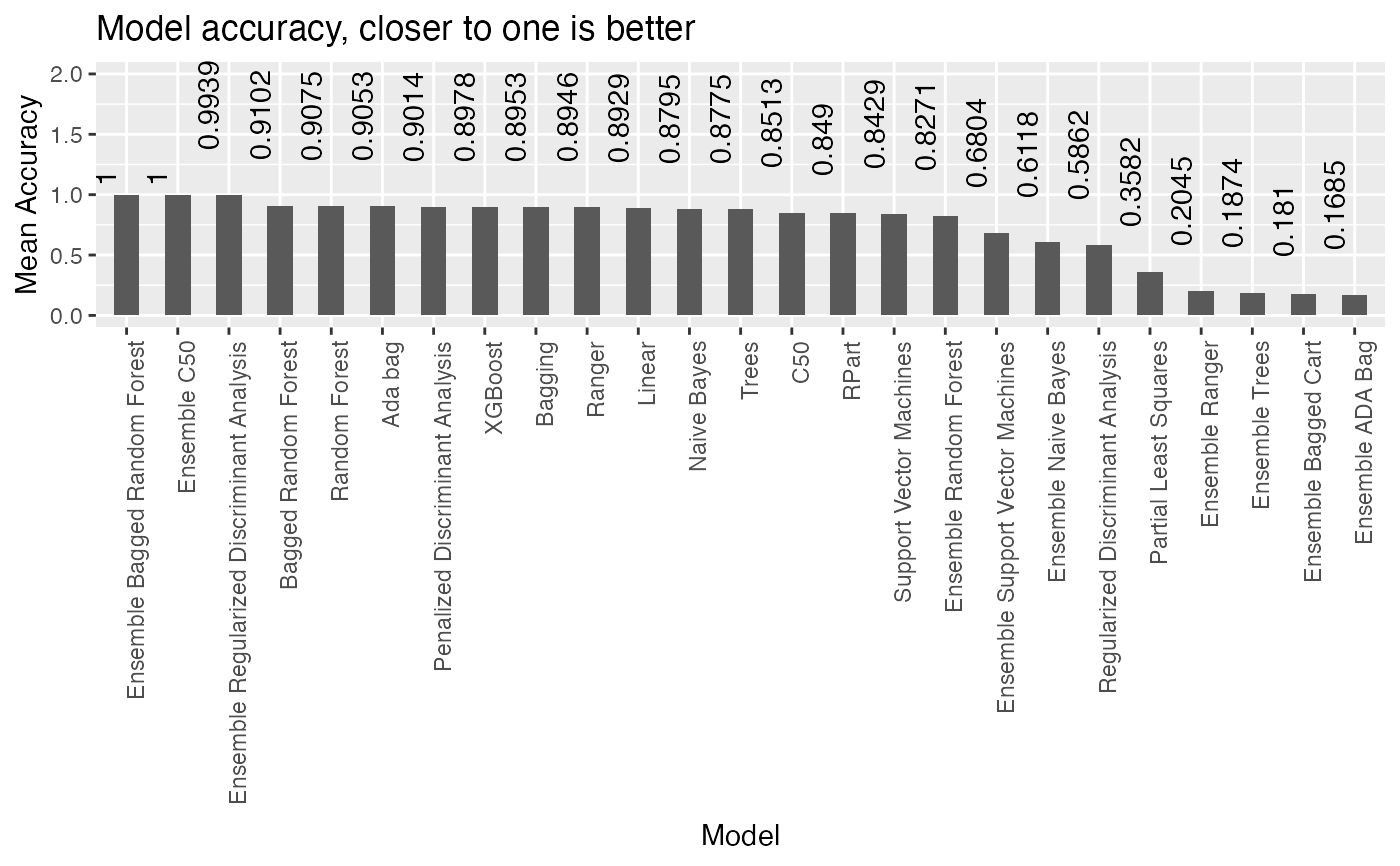

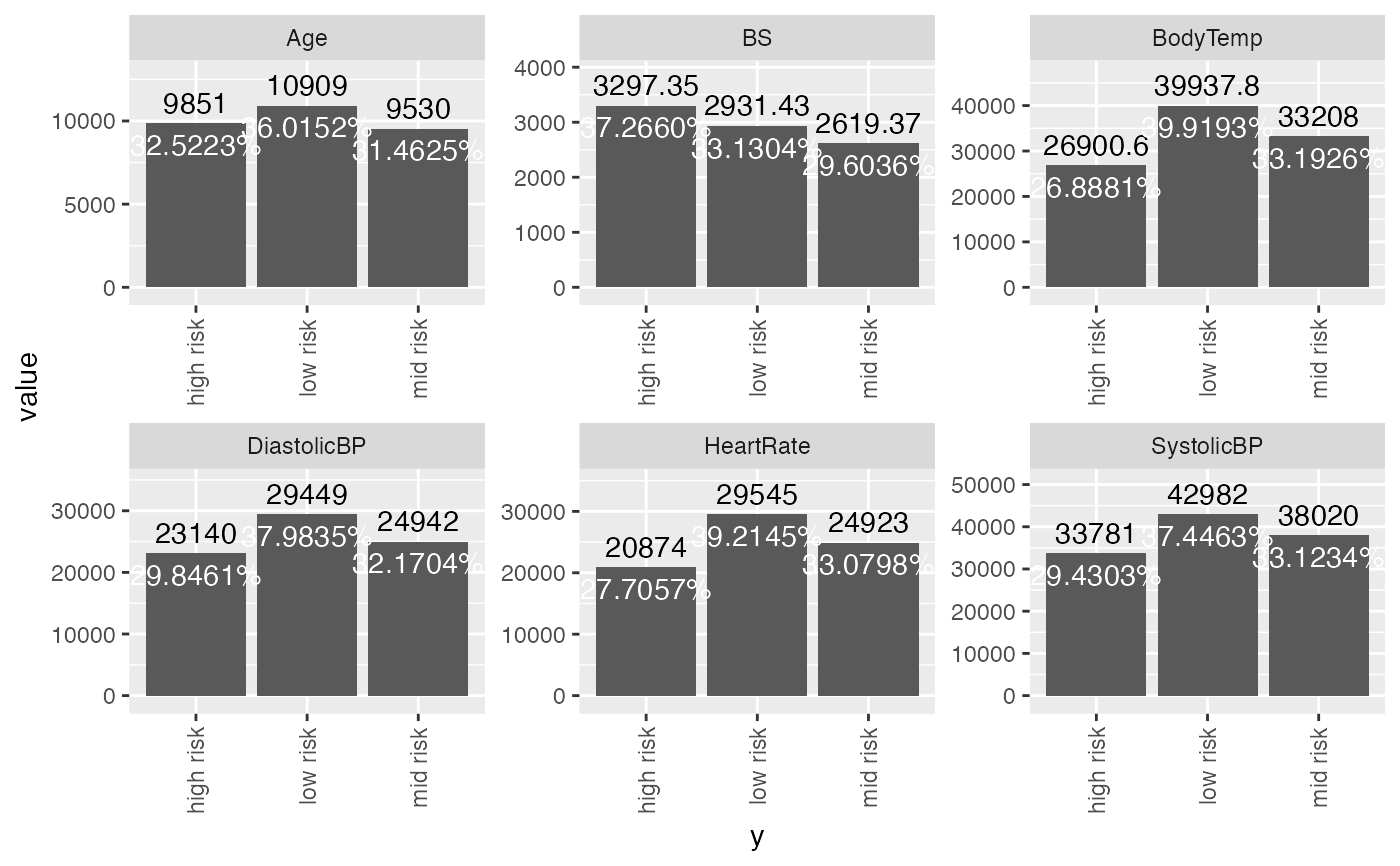

#> $Accuracy_Barchart

#>

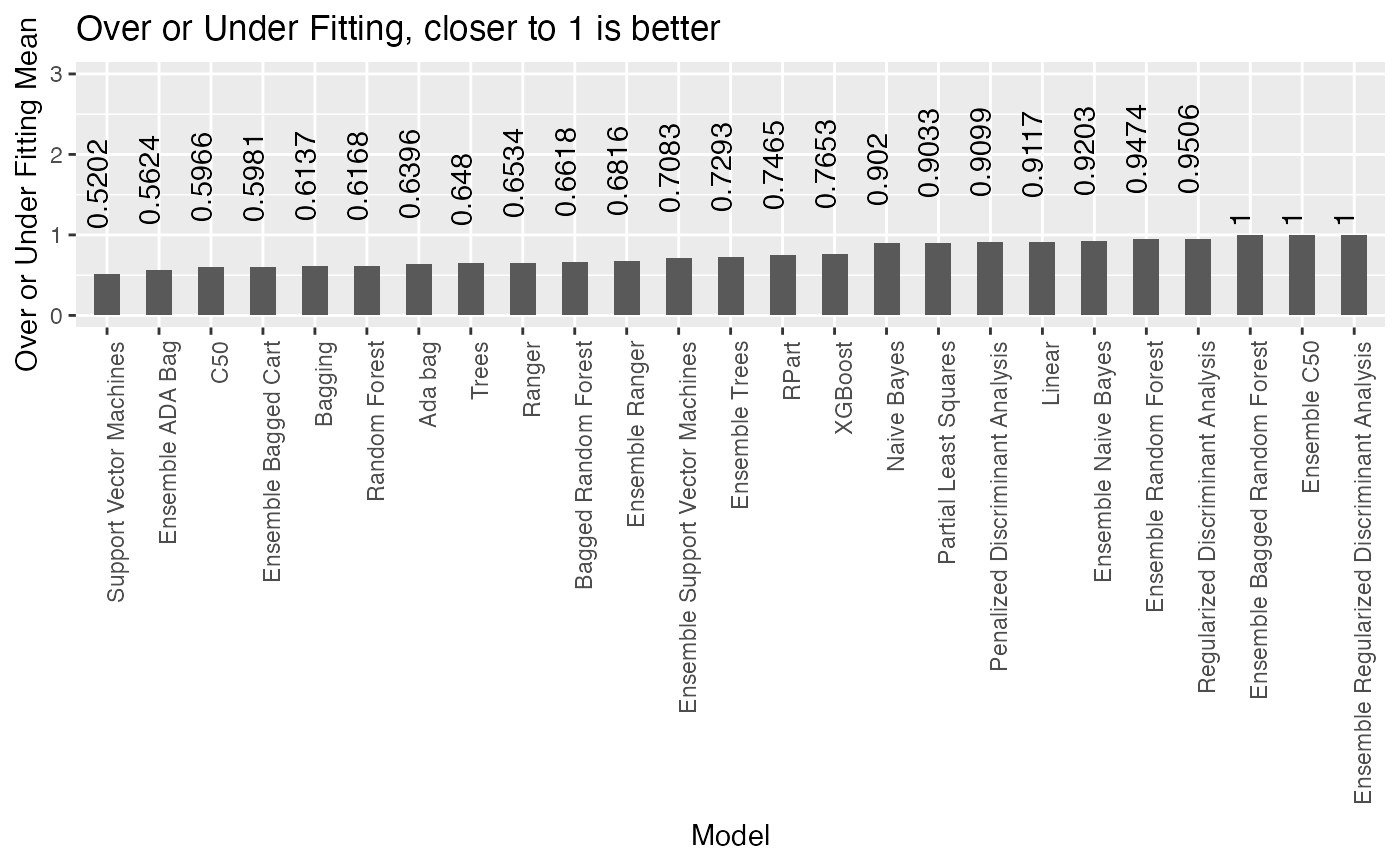

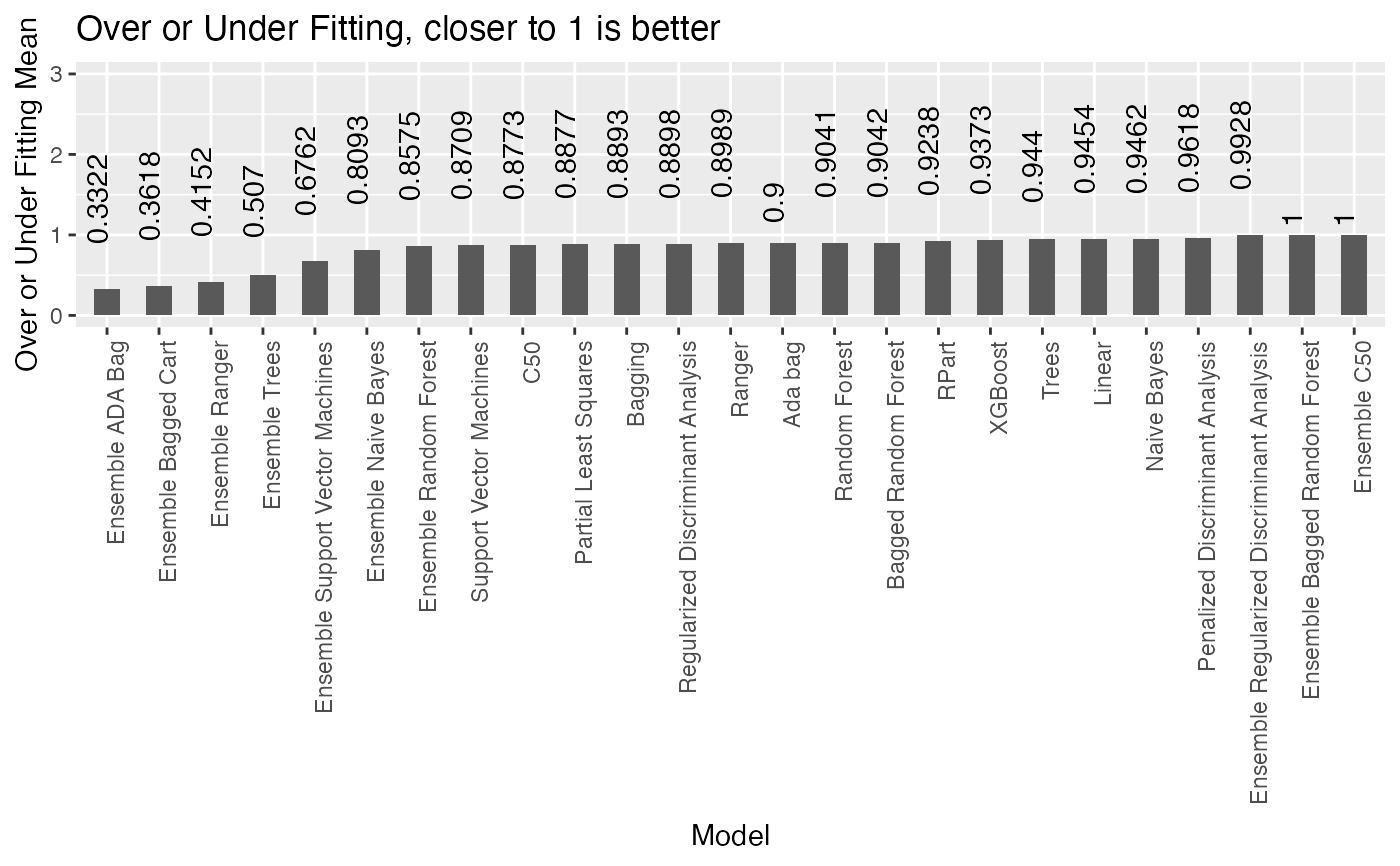

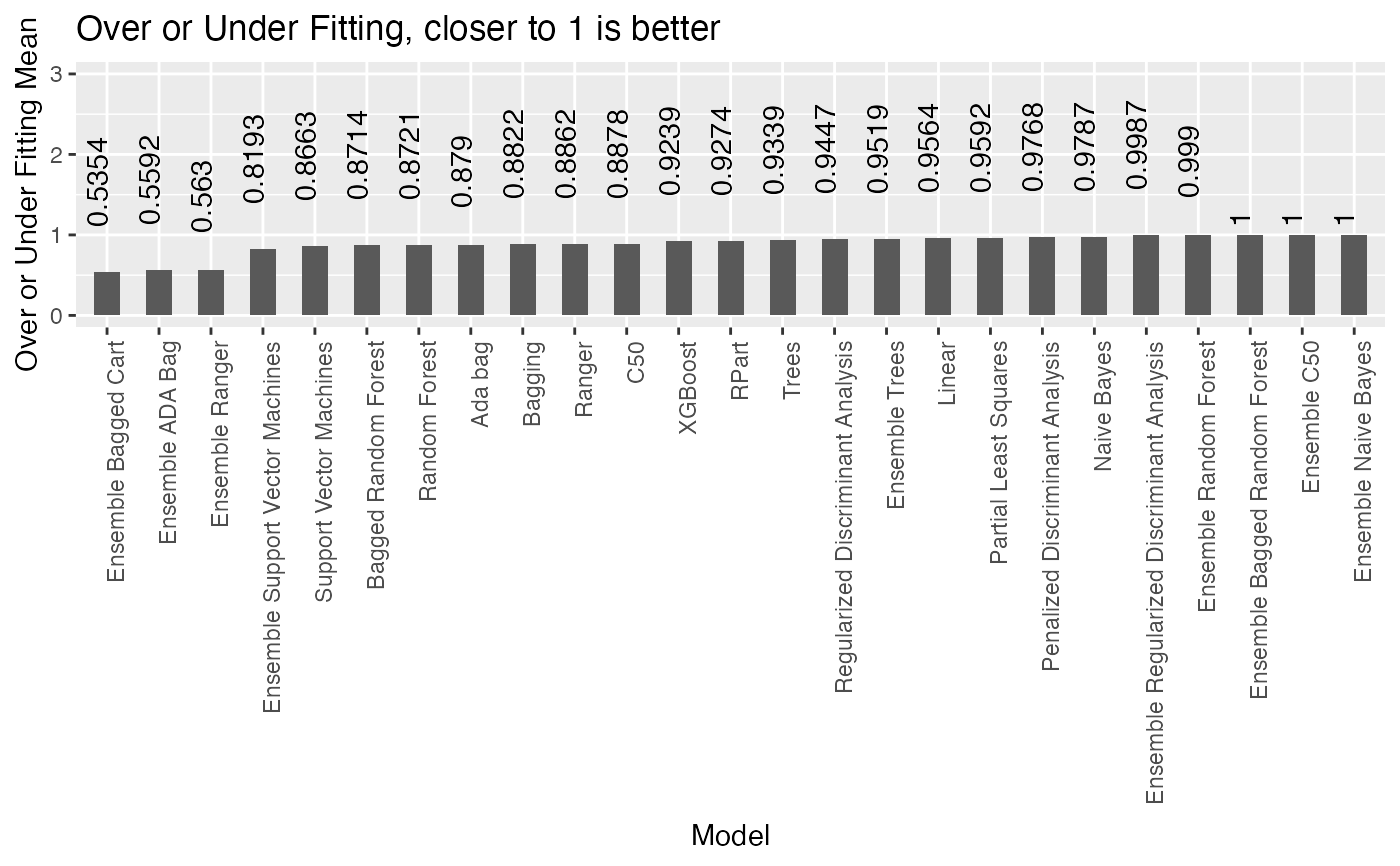

#> $Overfitting_barchart

#>

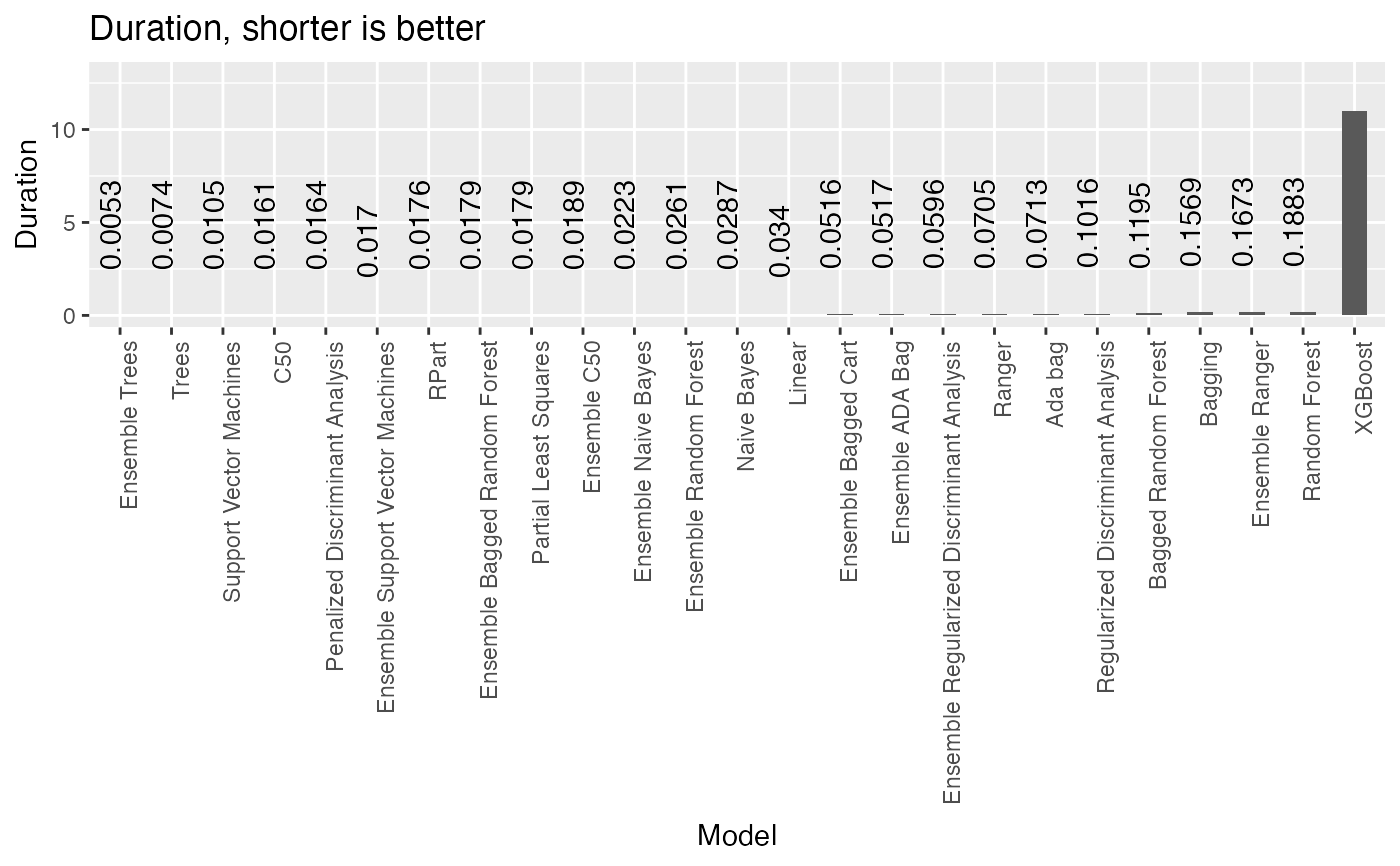

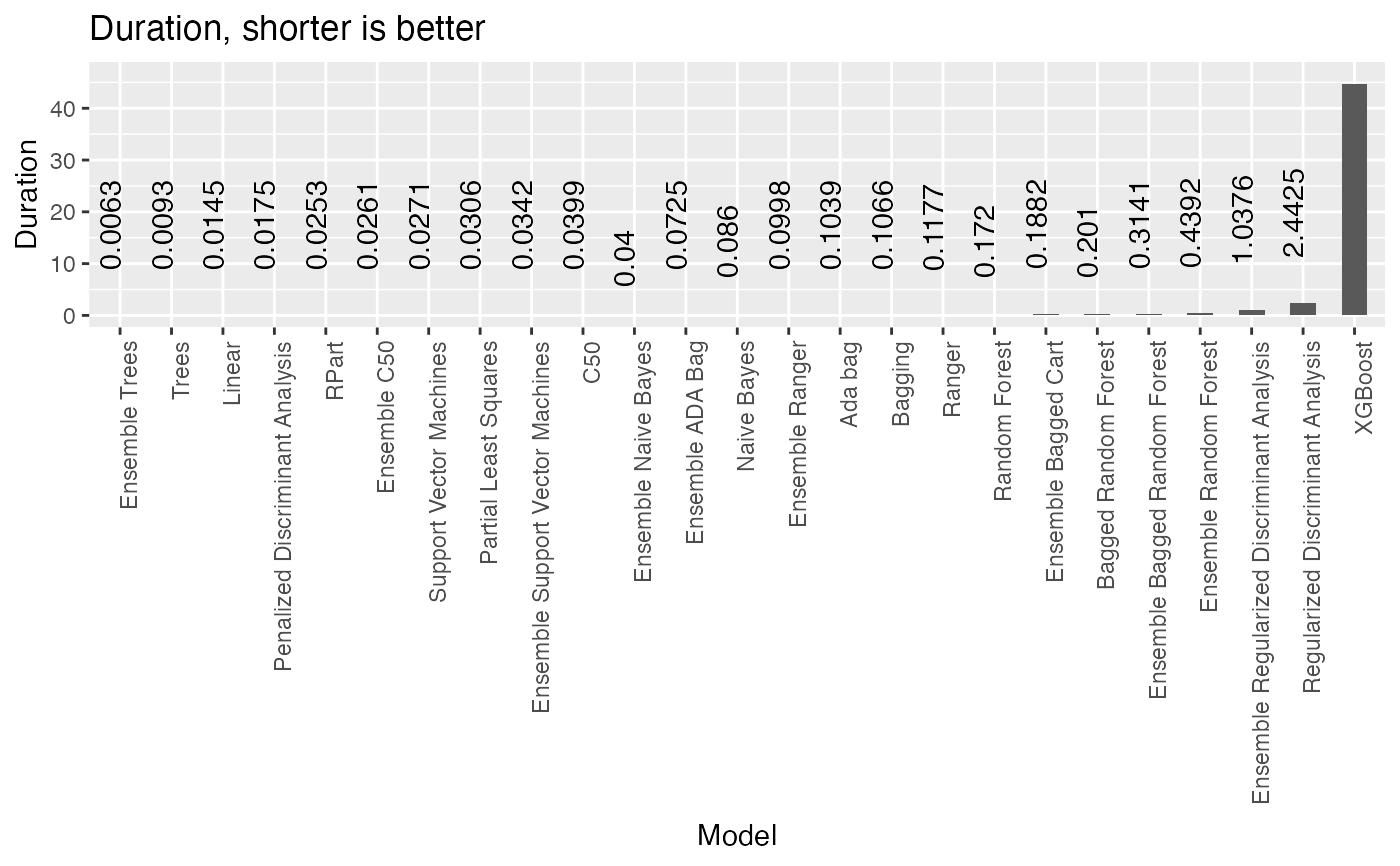

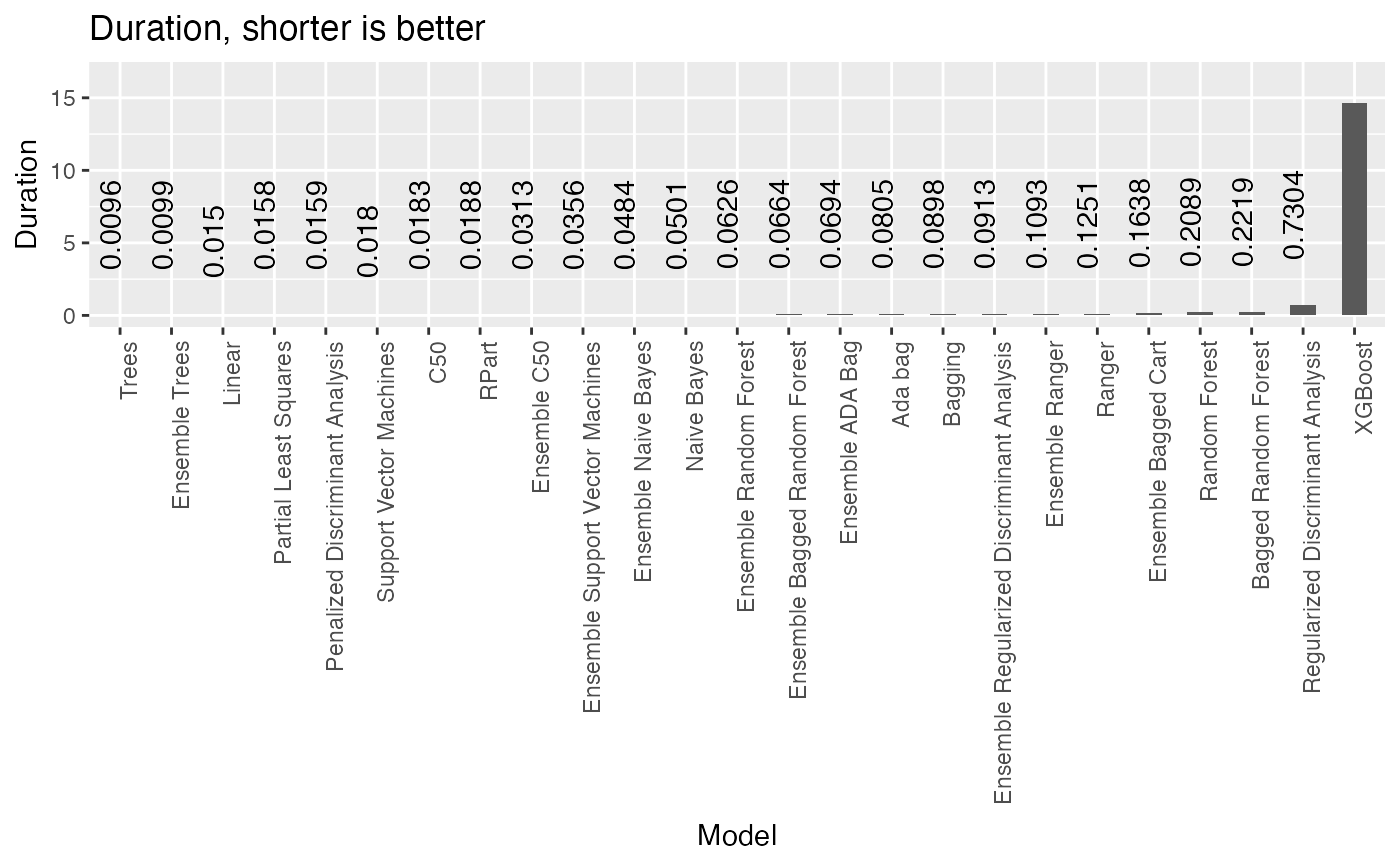

#> $Duration_barchart

#>

#> $Data_summary

#> Sales CompPrice Income Advertising

#> Min. : 0.000 Min. : 77 Min. : 21.00 Min. : 0.000

#> 1st Qu.: 5.390 1st Qu.:115 1st Qu.: 42.75 1st Qu.: 0.000

#> Median : 7.490 Median :125 Median : 69.00 Median : 5.000

#> Mean : 7.496 Mean :125 Mean : 68.66 Mean : 6.635

#> 3rd Qu.: 9.320 3rd Qu.:135 3rd Qu.: 91.00 3rd Qu.:12.000

#> Max. :16.270 Max. :175 Max. :120.00 Max. :29.000

#> Population Price Age Education Urban

#> Min. : 10.0 Min. : 24.0 Min. :25.00 Min. :10.0 No :118

#> 1st Qu.:139.0 1st Qu.:100.0 1st Qu.:39.75 1st Qu.:12.0 Yes:282

#> Median :272.0 Median :117.0 Median :54.50 Median :14.0

#> Mean :264.8 Mean :115.8 Mean :53.32 Mean :13.9

#> 3rd Qu.:398.5 3rd Qu.:131.0 3rd Qu.:66.00 3rd Qu.:16.0

#> Max. :509.0 Max. :191.0 Max. :80.00 Max. :18.0

#> US y

#> No :142 Bad : 96

#> Yes:258 Good : 85

#> Medium:219

#>

#>

#>

#>

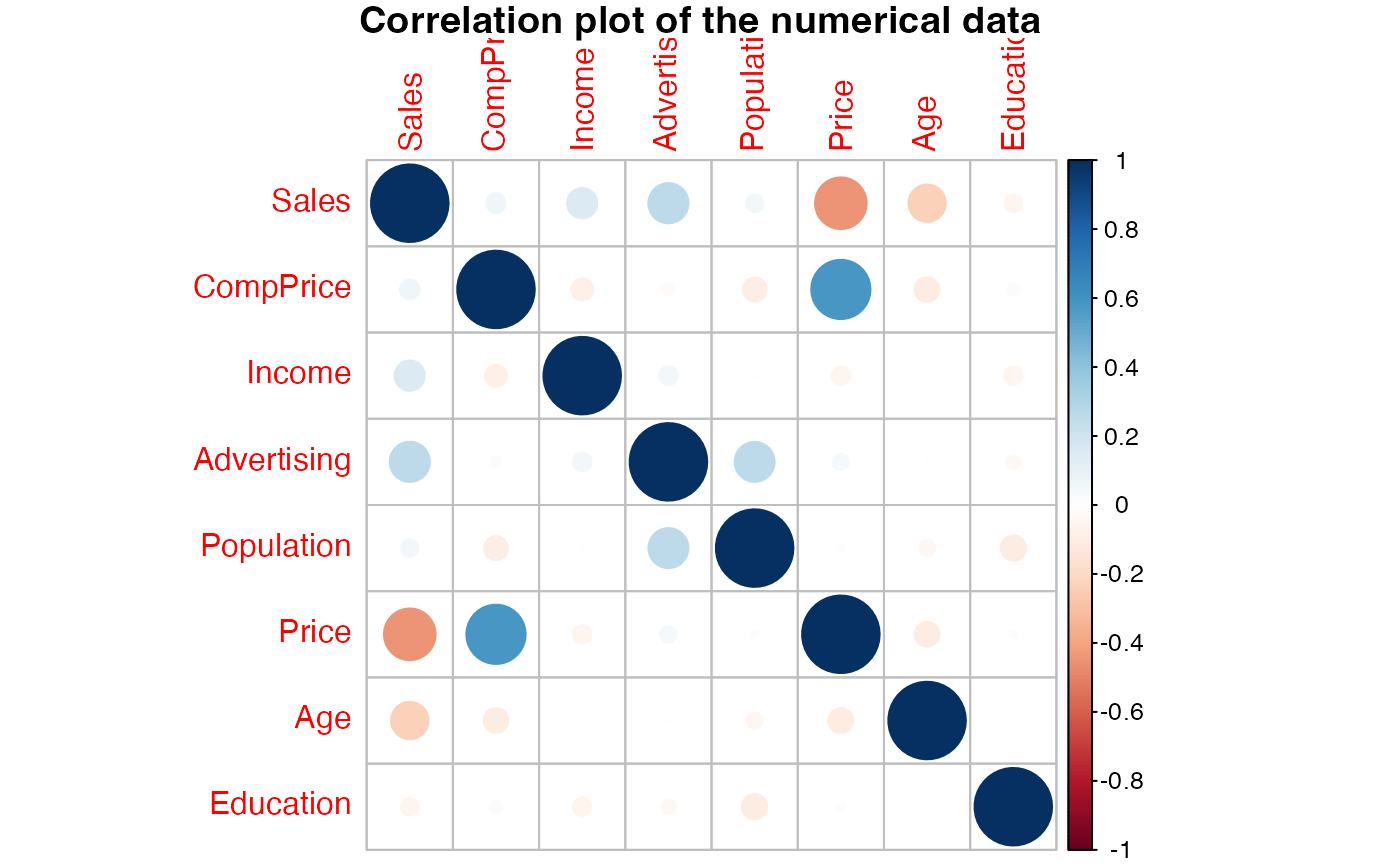

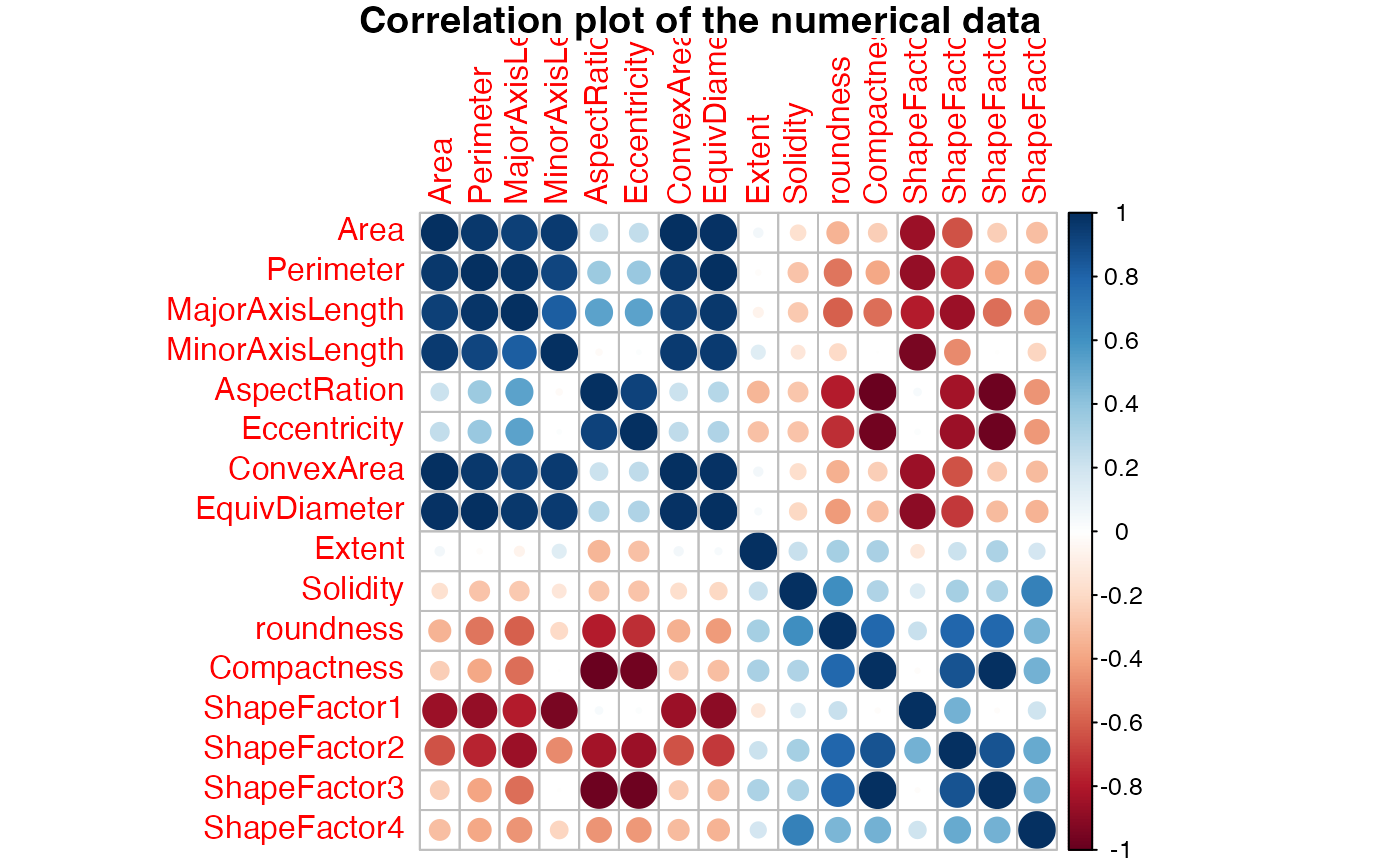

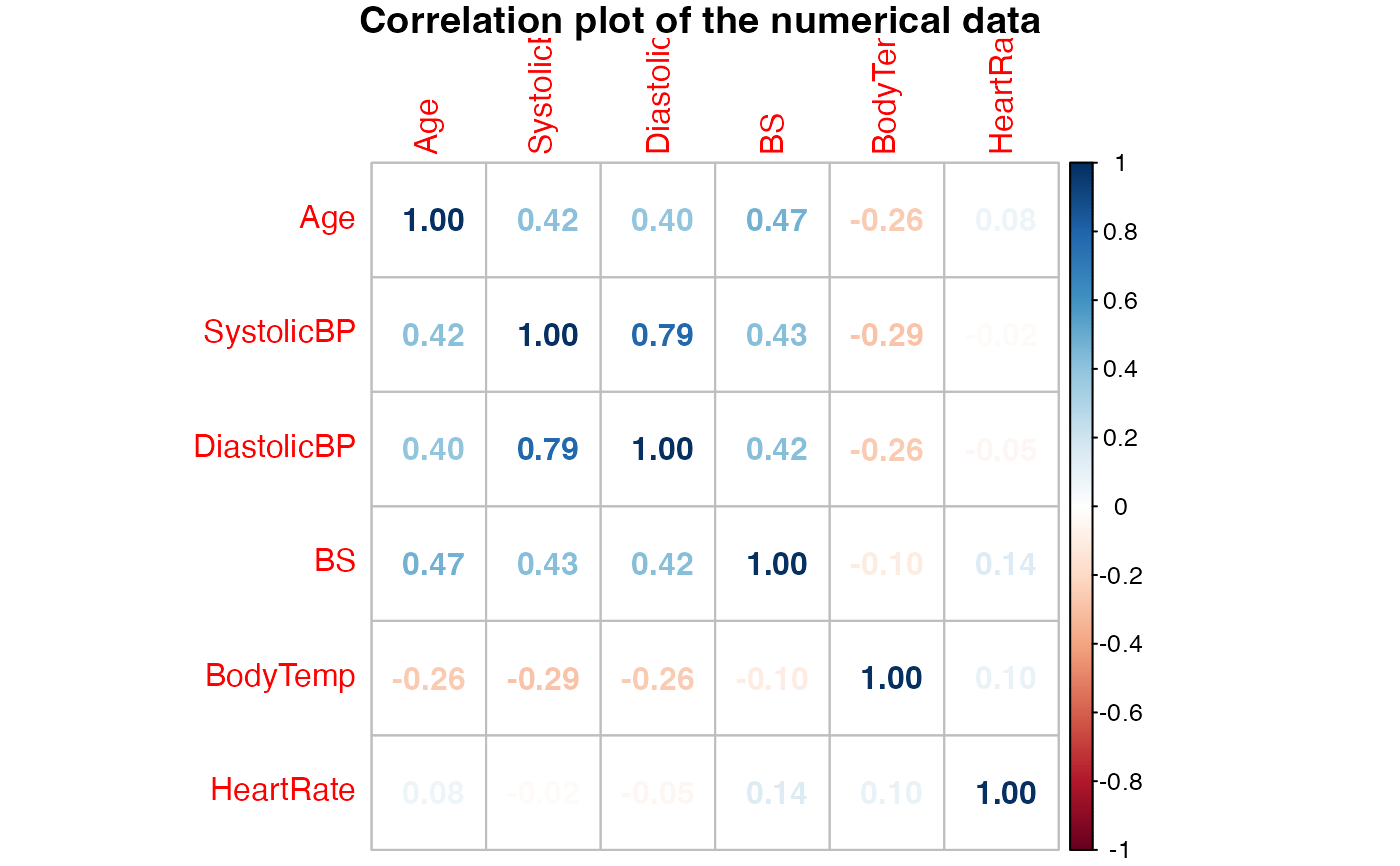

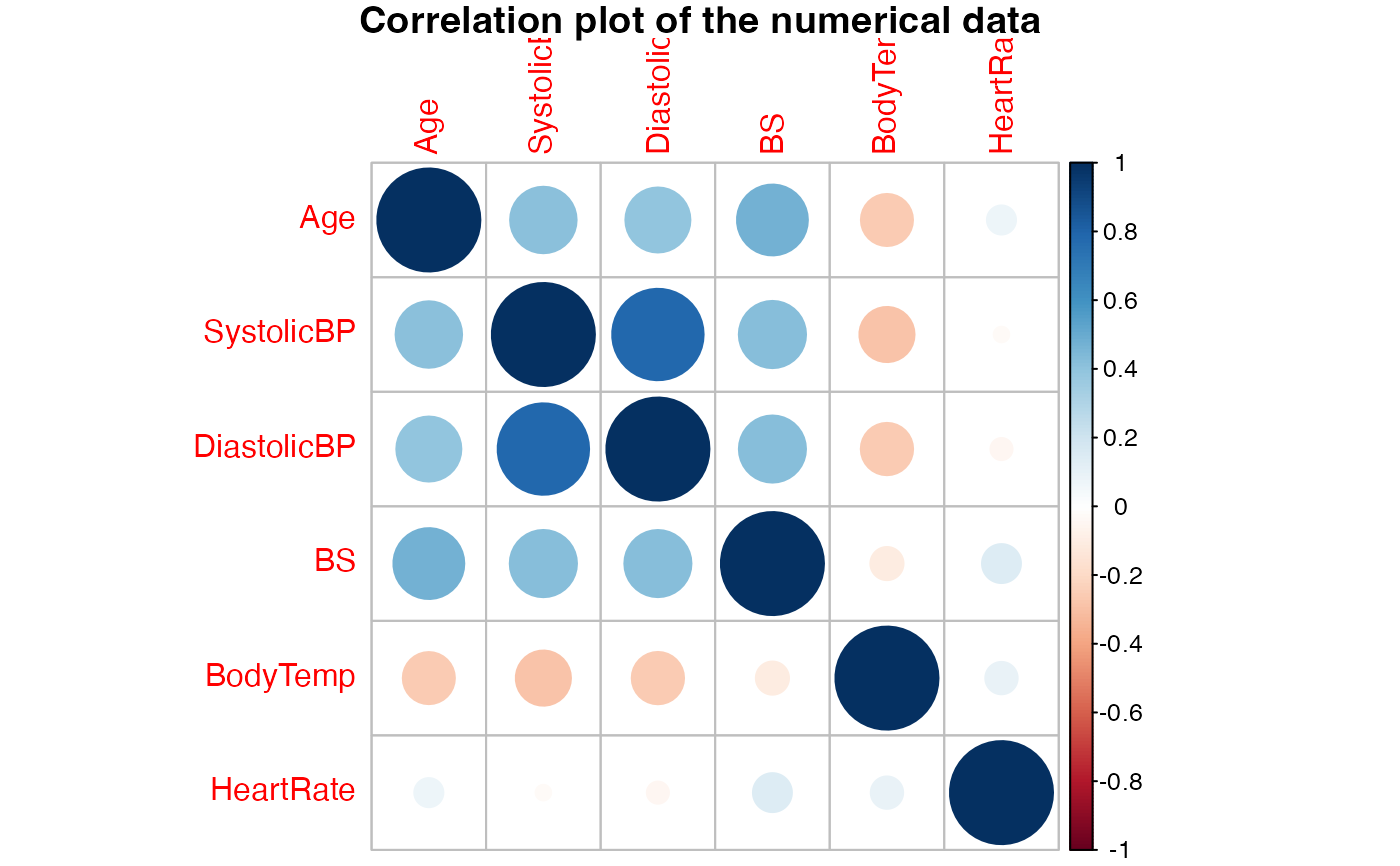

#> $Correlation_matrix

#>

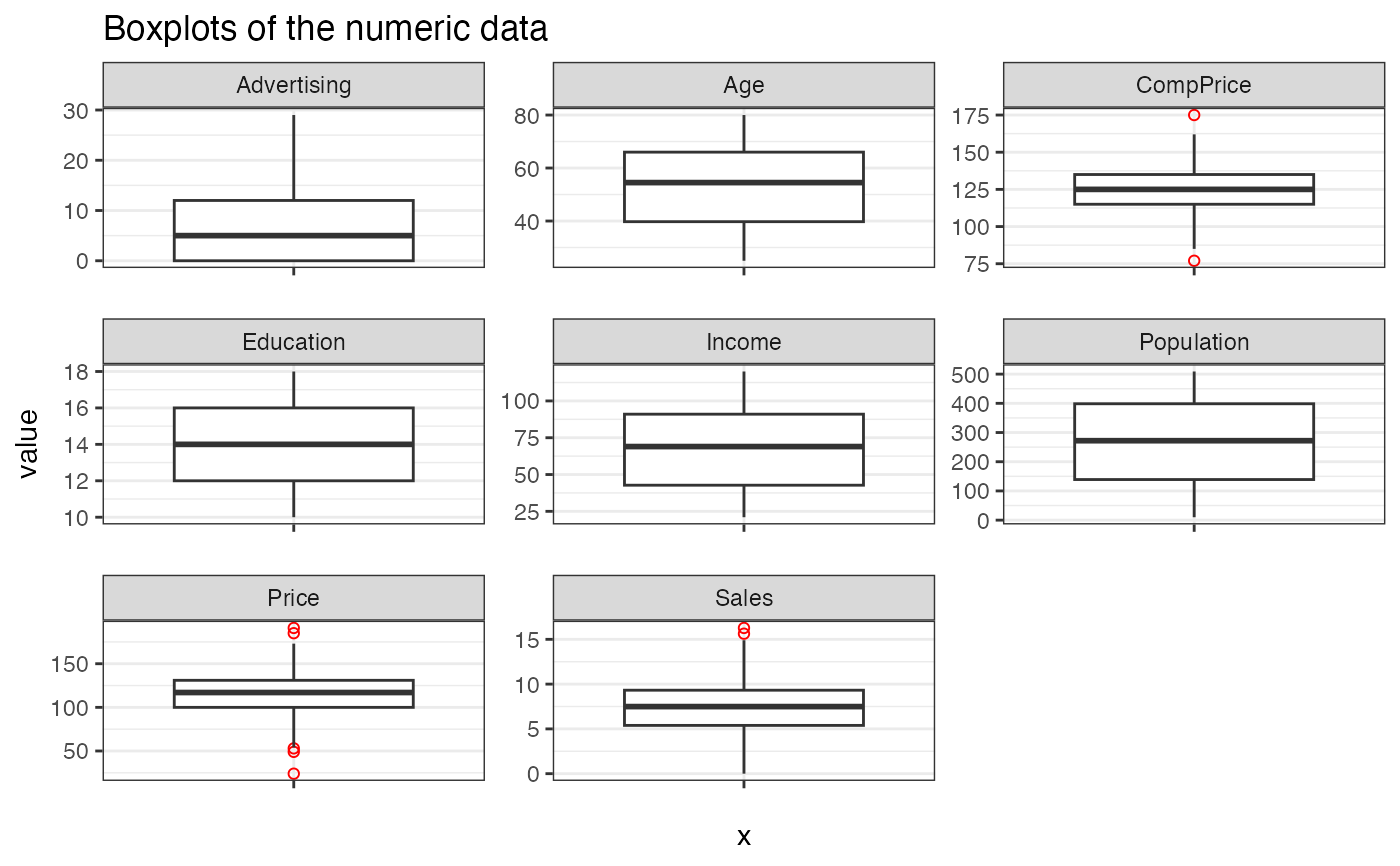

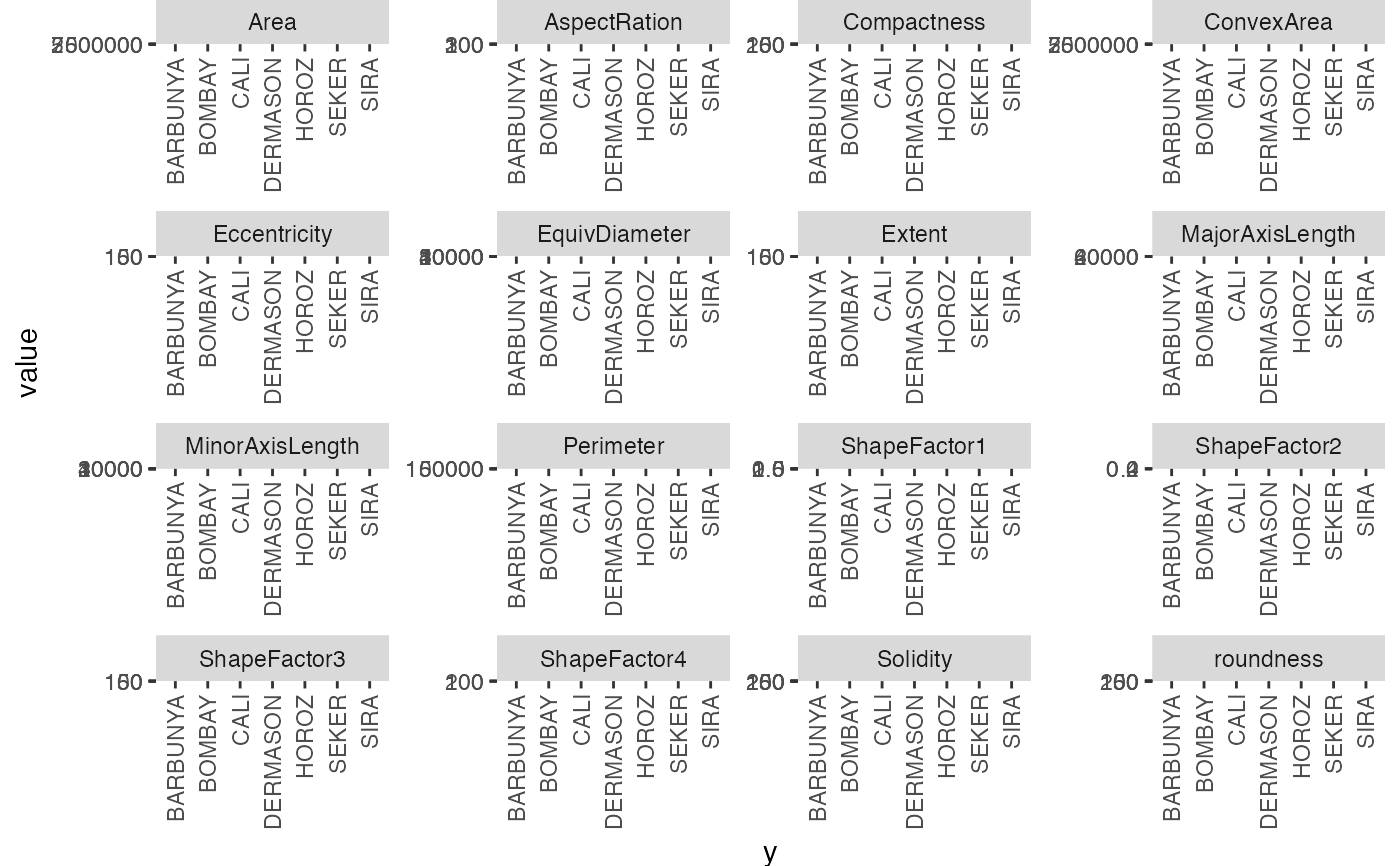

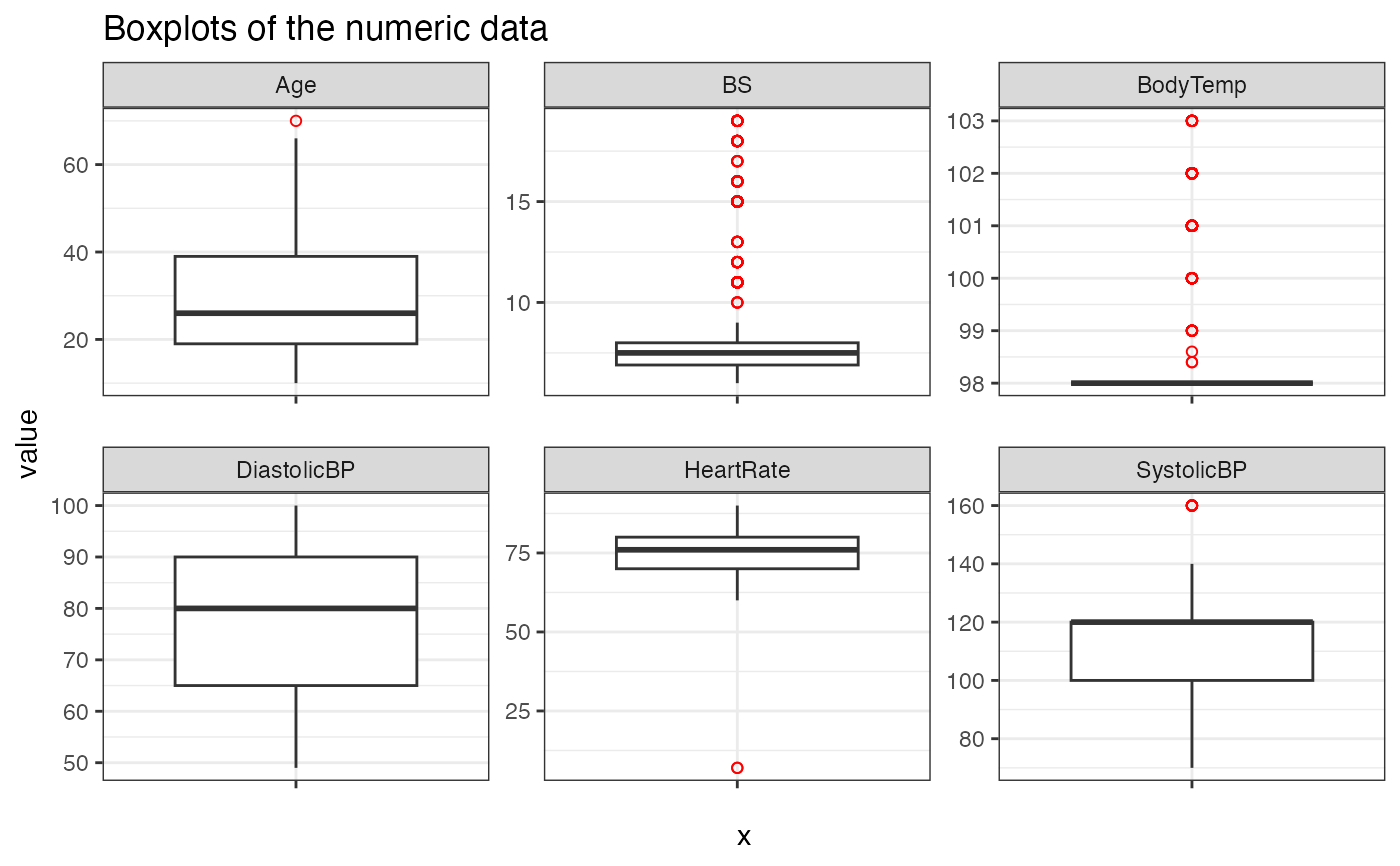

#> $Boxplots

#>

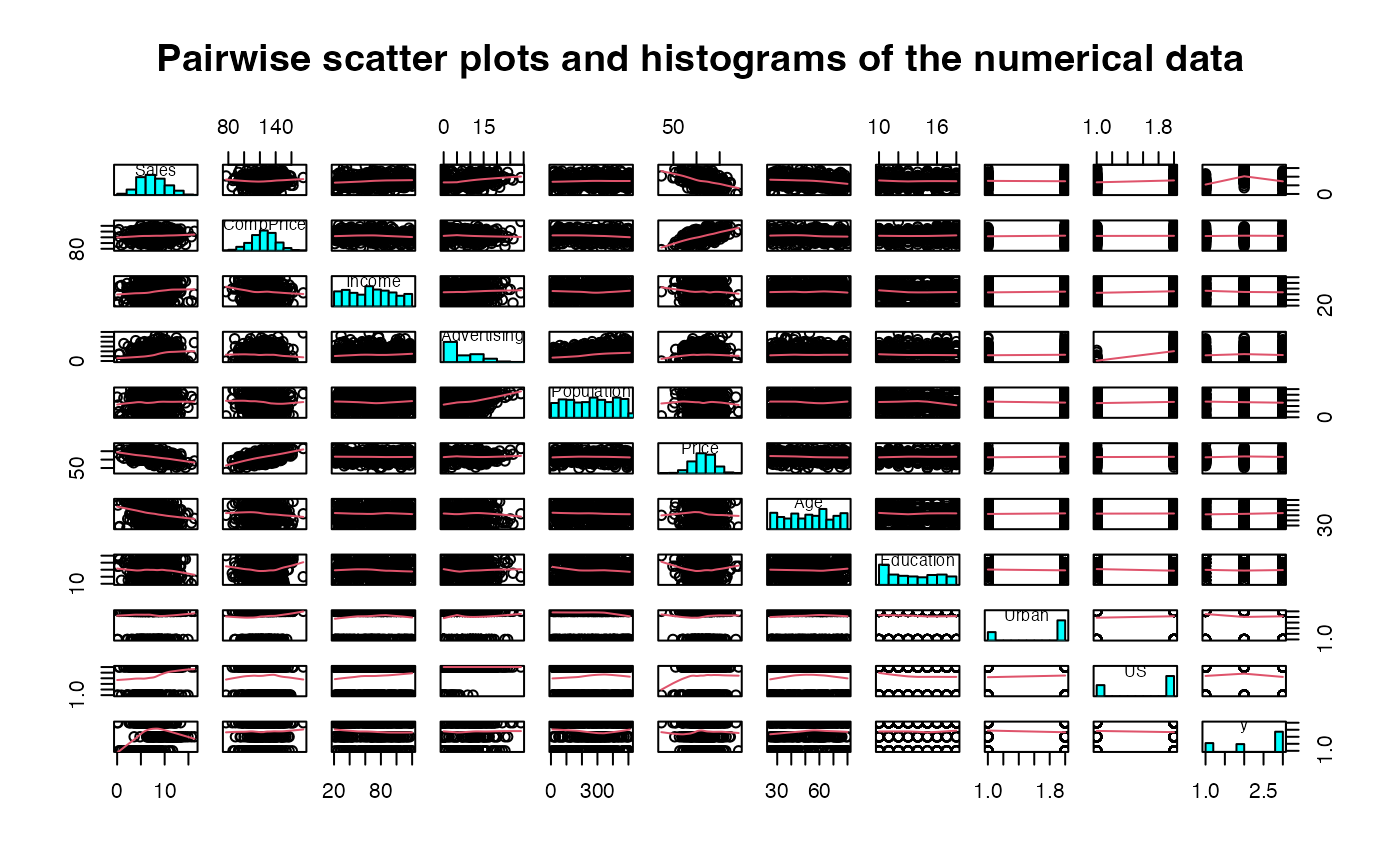

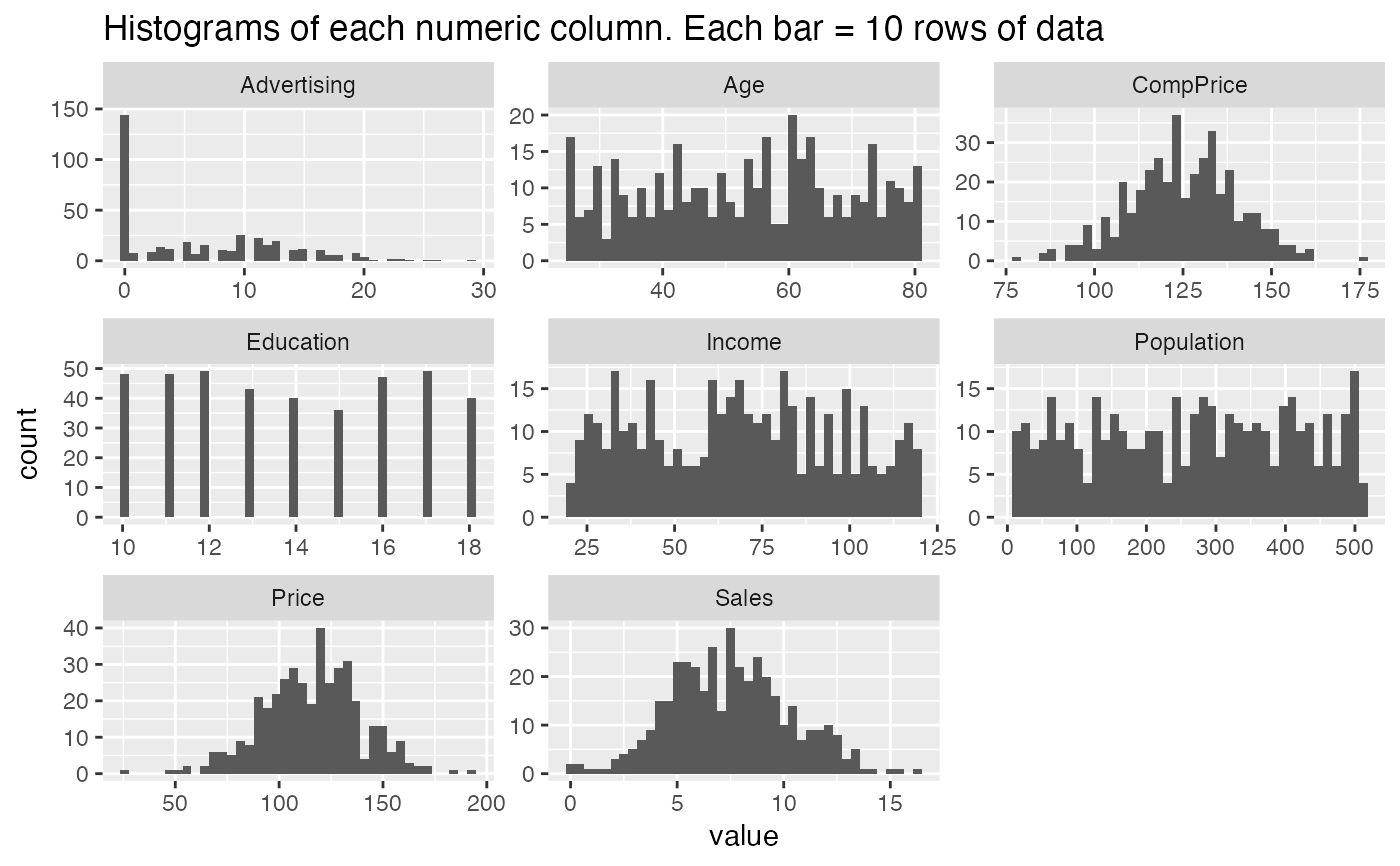

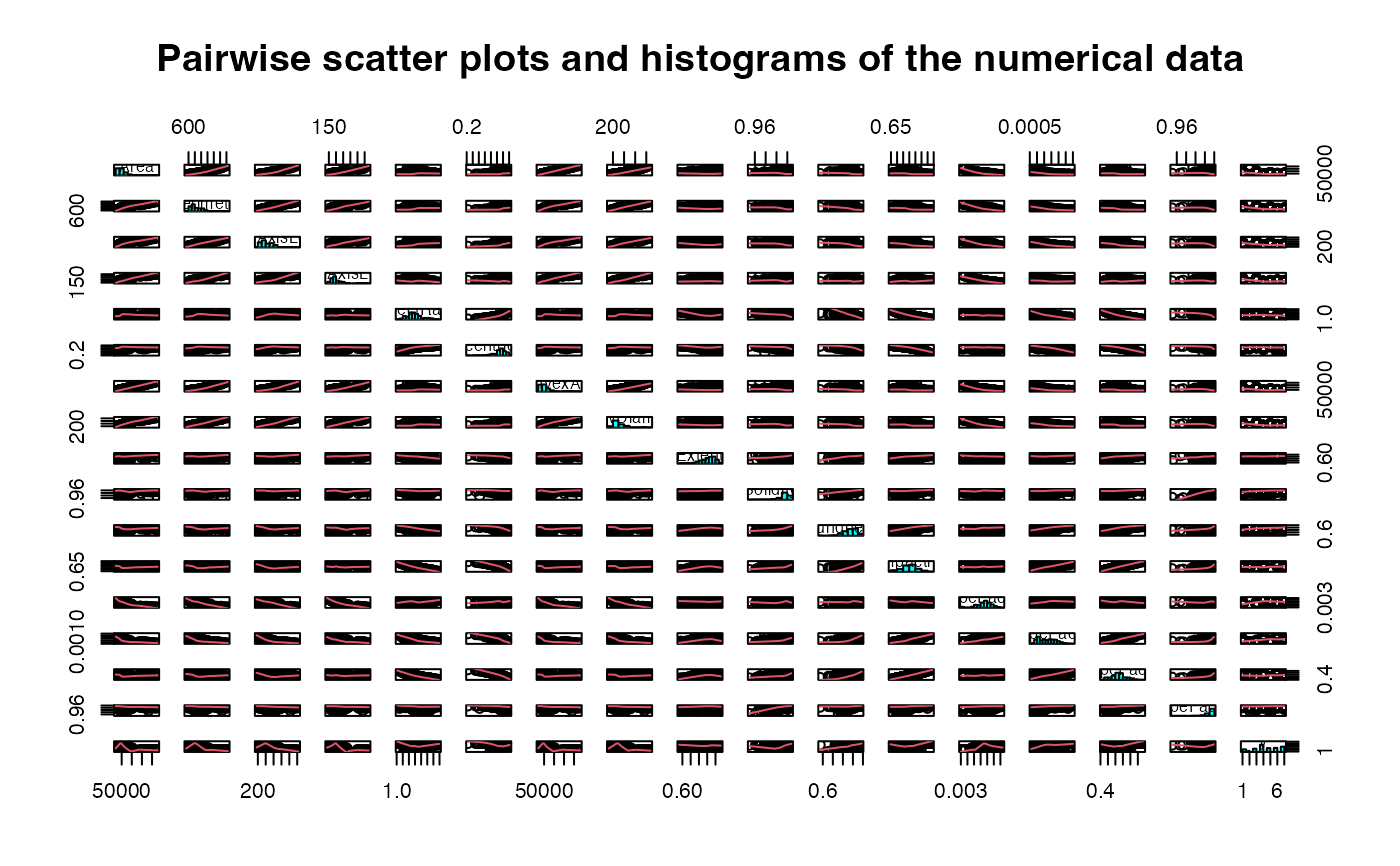

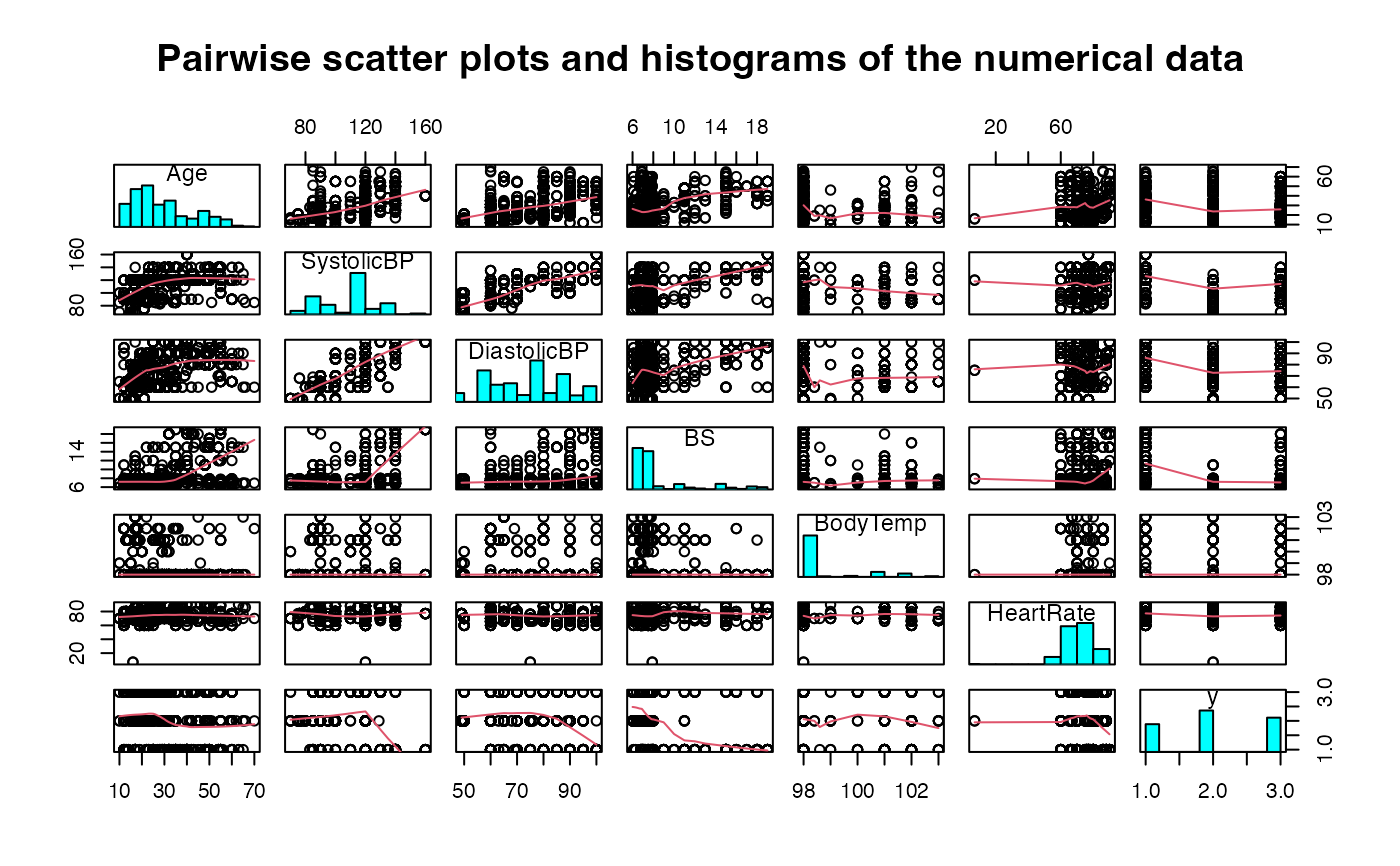

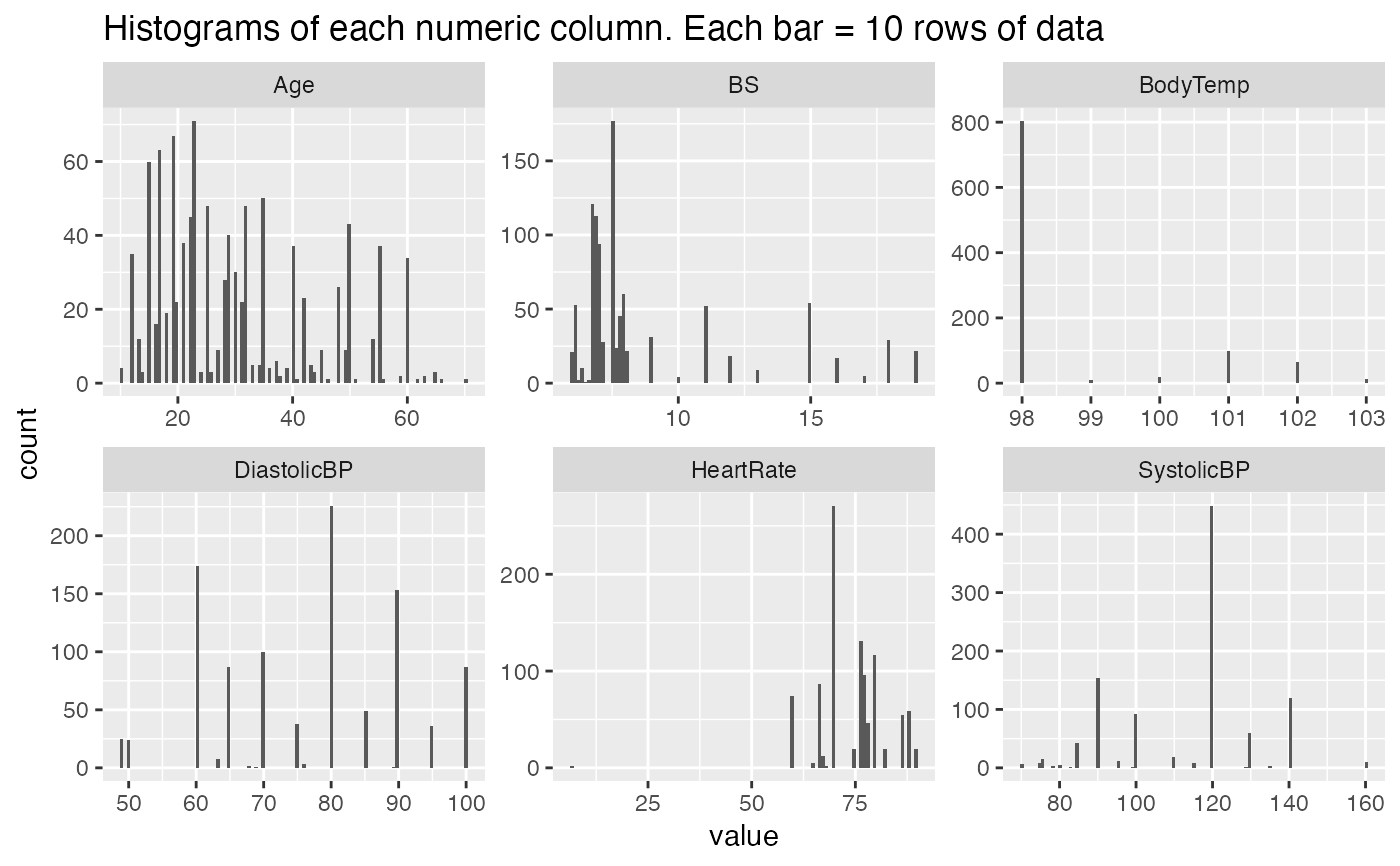

#> $Histograms

#>

#> $Head_of_data

#>

#> $Head_of_ensemble

#>

#> $Summary_tables

#> $Summary_tables$ADABag

#> y_test

#> adabag_test_pred Bad Good Medium

#> Bad 37 0 31

#> Good 0 38 10

#> Medium 43 37 123

#>

#> $Summary_tables$Bagging

#> y_test

#> bagging_test_pred Bad Good Medium

#> Bad 35 0 31

#> Good 1 36 8

#> Medium 44 39 125

#>

#> $Summary_tables$`Bagged Random Forest`

#> y_test

#> bag_rf_test_pred Bad Good Medium

#> Bad 40 0 32

#> Good 0 39 8

#> Medium 40 36 124

#>

#> $Summary_tables$C50

#> y_test

#> C50_test_pred Bad Good Medium

#> Bad 43 4 49

#> Good 2 35 17

#> Medium 35 36 98

#>

#> $Summary_tables$Linear

#> y_test

#> linear_test_pred Bad Good Medium

#> Bad 30 0 9

#> Good 0 48 1

#> Medium 50 27 154

#>

#> $Summary_tables$`Naive Bayes`

#> y_test

#> n_bayes_test_pred Bad Good Medium

#> Bad 22 1 12

#> Good 6 29 15

#> Medium 52 45 137

#>

#> $Summary_tables$`Partial Least Sqaures`

#> y_test

#> pls_test_predict Bad Good Medium

#> Bad 0 0 0

#> Good 0 0 0

#> Medium 80 75 164

#>

#> $Summary_tables$`Penalized Discriminant Ananysis`

#> y_test

#> pda_test_predict Bad Good Medium

#> Bad 57 0 22

#> Good 0 58 4

#> Medium 23 17 138

#>

#> $Summary_tables$`Random Forest`

#> y_test

#> rf_test_pred Bad Good Medium

#> Bad 30 0 22

#> Good 1 34 10

#> Medium 49 41 132

#>

#> $Summary_tables$Ranger

#> y_test

#> ranger_test_predict Bad Good Medium

#> Bad 29 0 18

#> Good 0 33 8

#> Medium 51 42 138

#>

#> $Summary_tables$`Regularized Discriminant Analysis`

#> y_test

#> Bad Good Medium

#> Bad 27 0 11

#> Good 0 27 2

#> Medium 53 48 151

#>

#> $Summary_tables$RPart

#> y_test

#> rpart_test_predict Bad Good Medium

#> Bad 33 1 35

#> Good 1 29 9

#> Medium 46 45 120

#>

#> $Summary_tables$`Support Vector Machines`

#> y_test

#> svm_test_pred Bad Good Medium

#> Bad 1 0 0

#> Good 0 0 0

#> Medium 79 75 164

#>

#> $Summary_tables$Trees

#> y_test

#> tree_test_pred Bad Good Medium

#> Bad 49 2 47

#> Good 2 39 18

#> Medium 29 34 99

#>

#> $Summary_tables$XGBoost

#>

#> Bad Good Medium

#> Bad 33 1 23

#> Good 2 32 9

#> Medium 45 42 132

#>

#> $Summary_tables$`Ensemble ADABag`

#> ensemble_y_test

#> ensemble_adabag_test_pred Bad Good Medium

#> Bad 7 8 11

#> Good 2 0 1

#> Medium 25 25 54

#>

#> $Summary_tables$`Ensemble Bagged Cart`

#>

#> ensemble_bag_cart_test_pred Bad Good Medium

#> Bad 6 5 9

#> Good 2 1 3

#> Medium 26 27 54

#>

#> $Summary_tables$`Ensemble Bagged Random Forest`

#>

#> ensemble_bag_rf_test_pred Bad Good Medium

#> Bad 34 0 0

#> Good 0 33 0

#> Medium 0 0 66

#>

#> $Summary_tables$`Ensemble C50`

#> ensemble_y_test

#> ensemble_C50_test_pred Bad Good Medium

#> Bad 34 0 0

#> Good 0 33 0

#> Medium 0 0 66

#>

#> $Summary_tables$`Ensemble Naive Bayes`

#> ensemble_y_test

#> ensemble_n_bayes_test_pred Bad Good Medium

#> Bad 28 1 0

#> Good 0 29 0

#> Medium 6 3 66

#>

#> $Summary_tables$`Ensemble Ranger`

#> ensemble_y_test

#> ensemble_ranger_test_pred Bad Good Medium

#> Bad 3 6 6

#> Good 2 0 1

#> Medium 29 27 59

#>

#> $Summary_tables$`Ensemble Random Forest`

#> ensemble_y_test

#> ensemble_rf_test_pred Bad Good Medium

#> Bad 30 1 0

#> Good 0 30 0

#> Medium 4 2 66

#>

#> $Summary_tables$`Ensemble Regularized Discriminant Analysis`

#> ensemble_y_test

#> Bad Good Medium

#> Bad 34 0 0

#> Good 0 33 0

#> Medium 0 0 66

#>

#> $Summary_tables$`Ensemble Support Vector Machines`

#> ensemble_y_test

#> ensemble_svm_test_pred Bad Good Medium

#> Bad 15 0 0

#> Good 0 17 0

#> Medium 19 16 66

#>

#> $Summary_tables$`Ensemble Trees`

#> ensemble_y_test

#> ensemble_tree_test_pred Bad Good Medium

#> Bad 12 7 16

#> Good 2 1 1

#> Medium 20 25 49

#>

#>

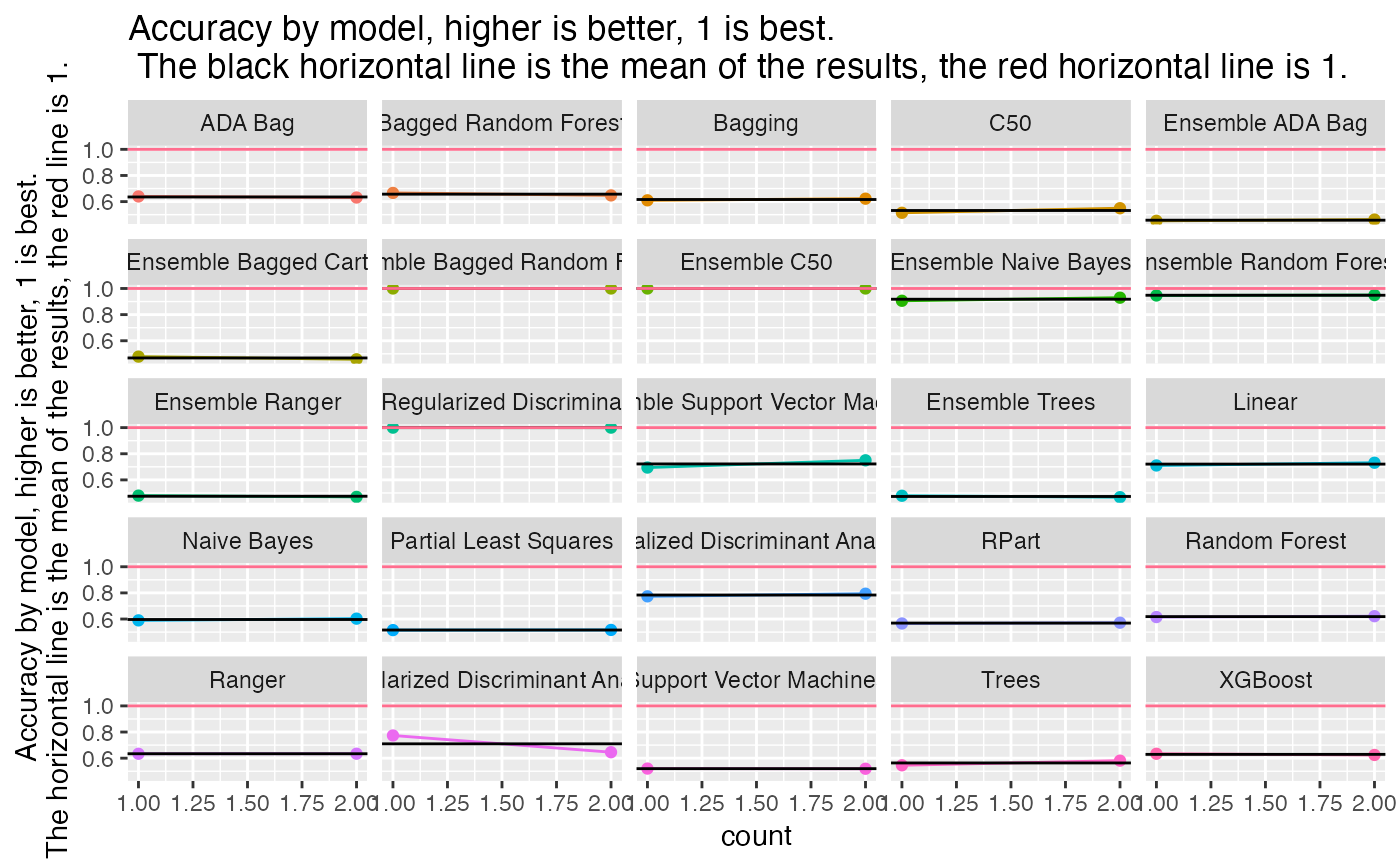

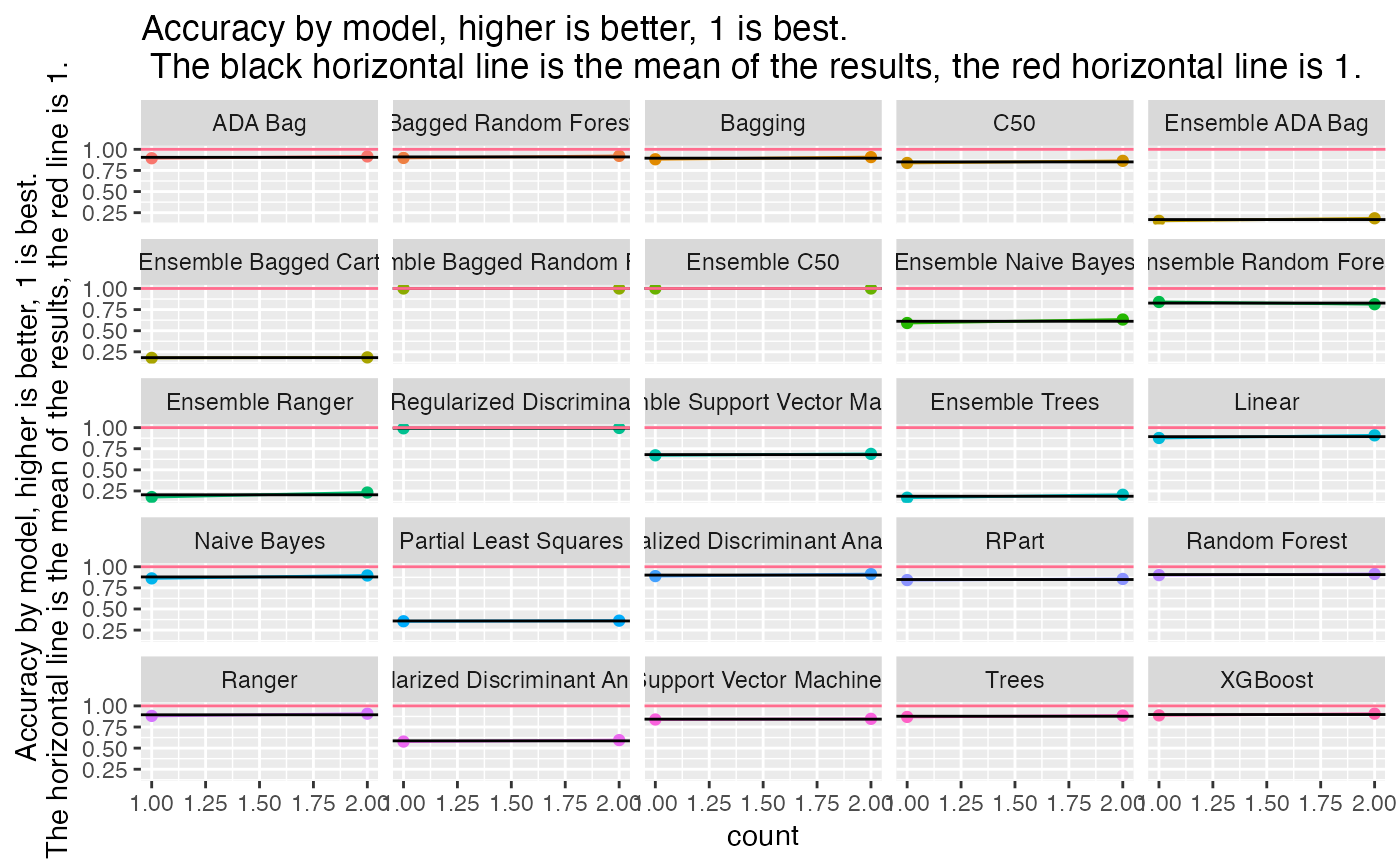

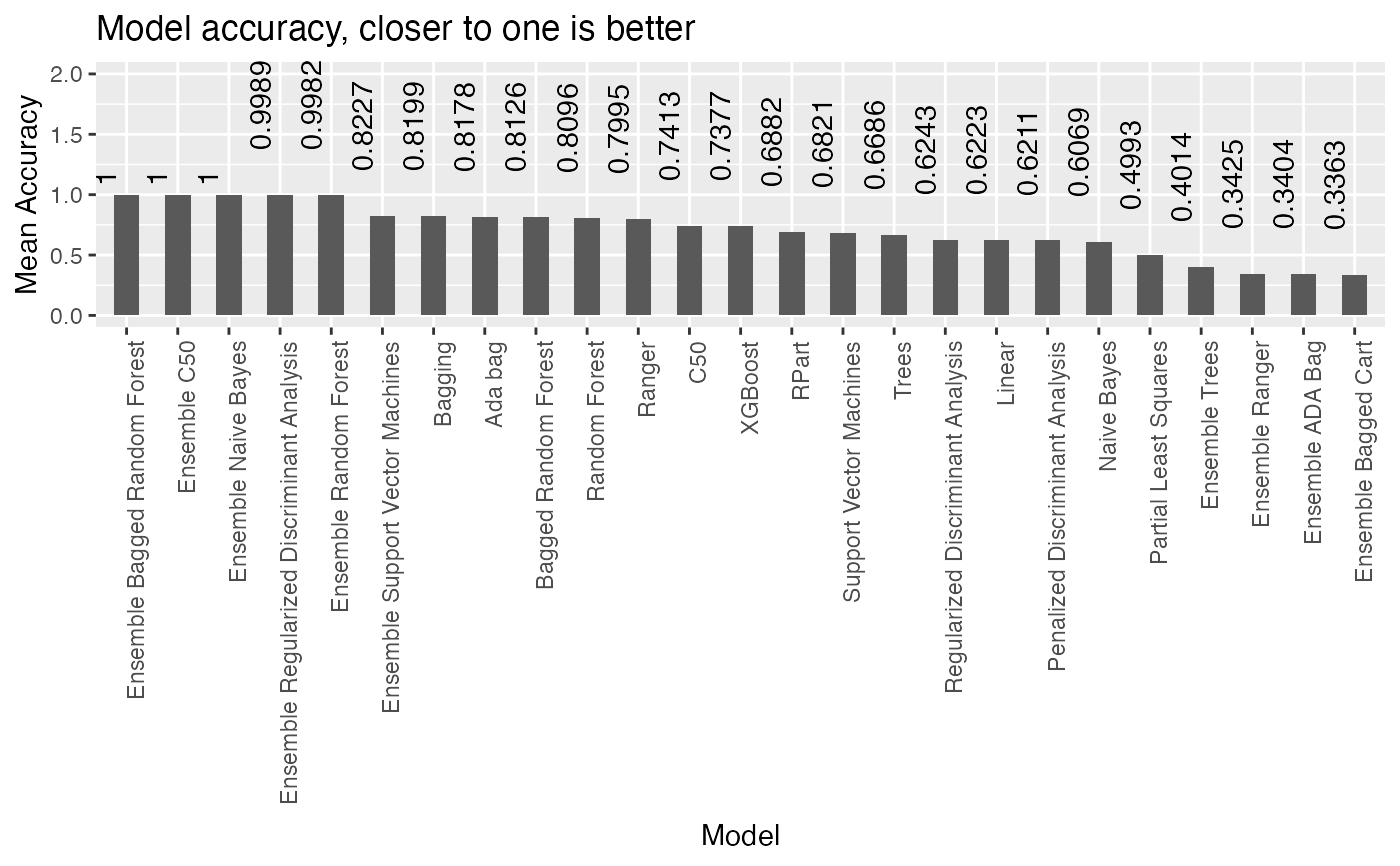

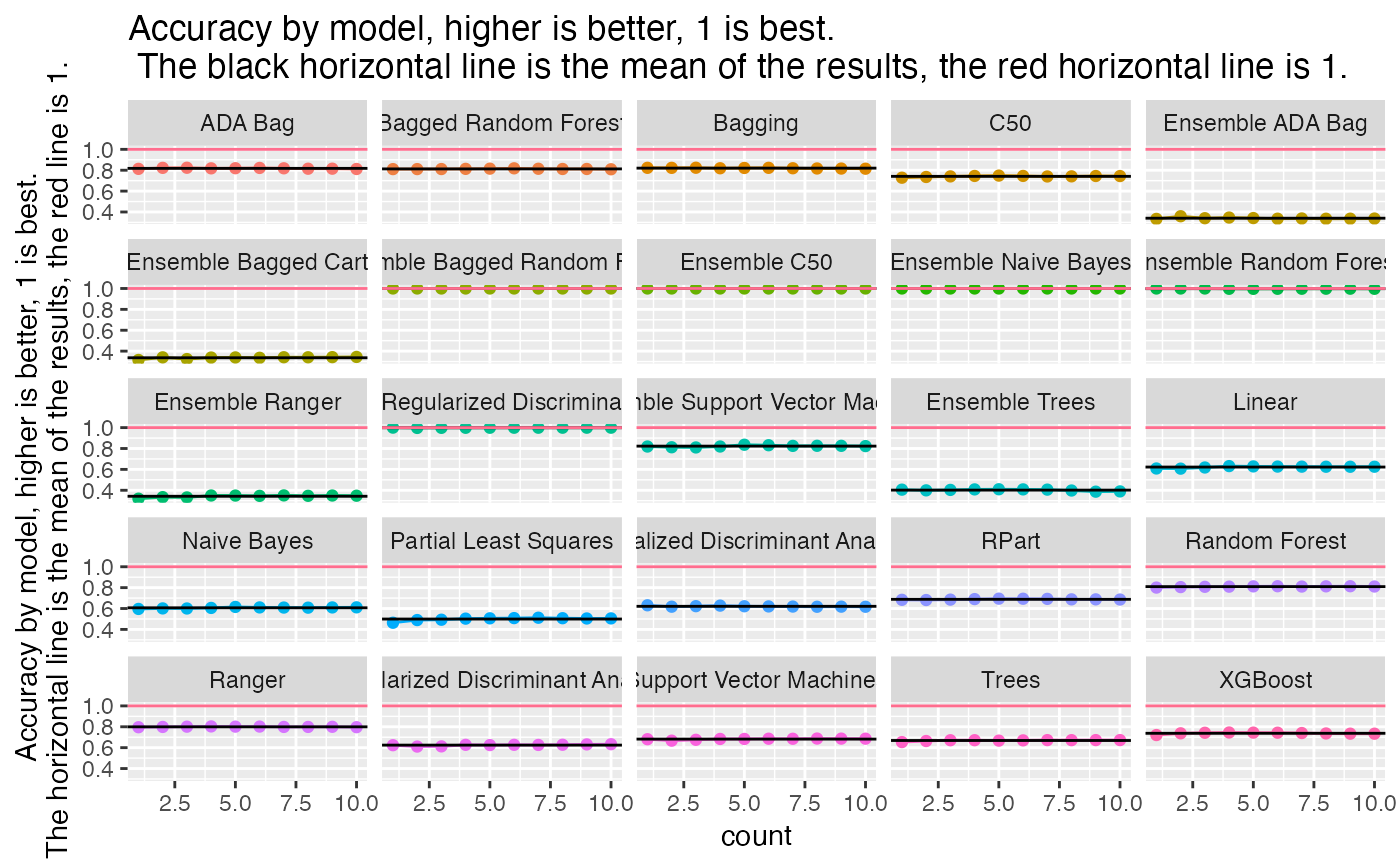

#> $Accuracy_plot

#>

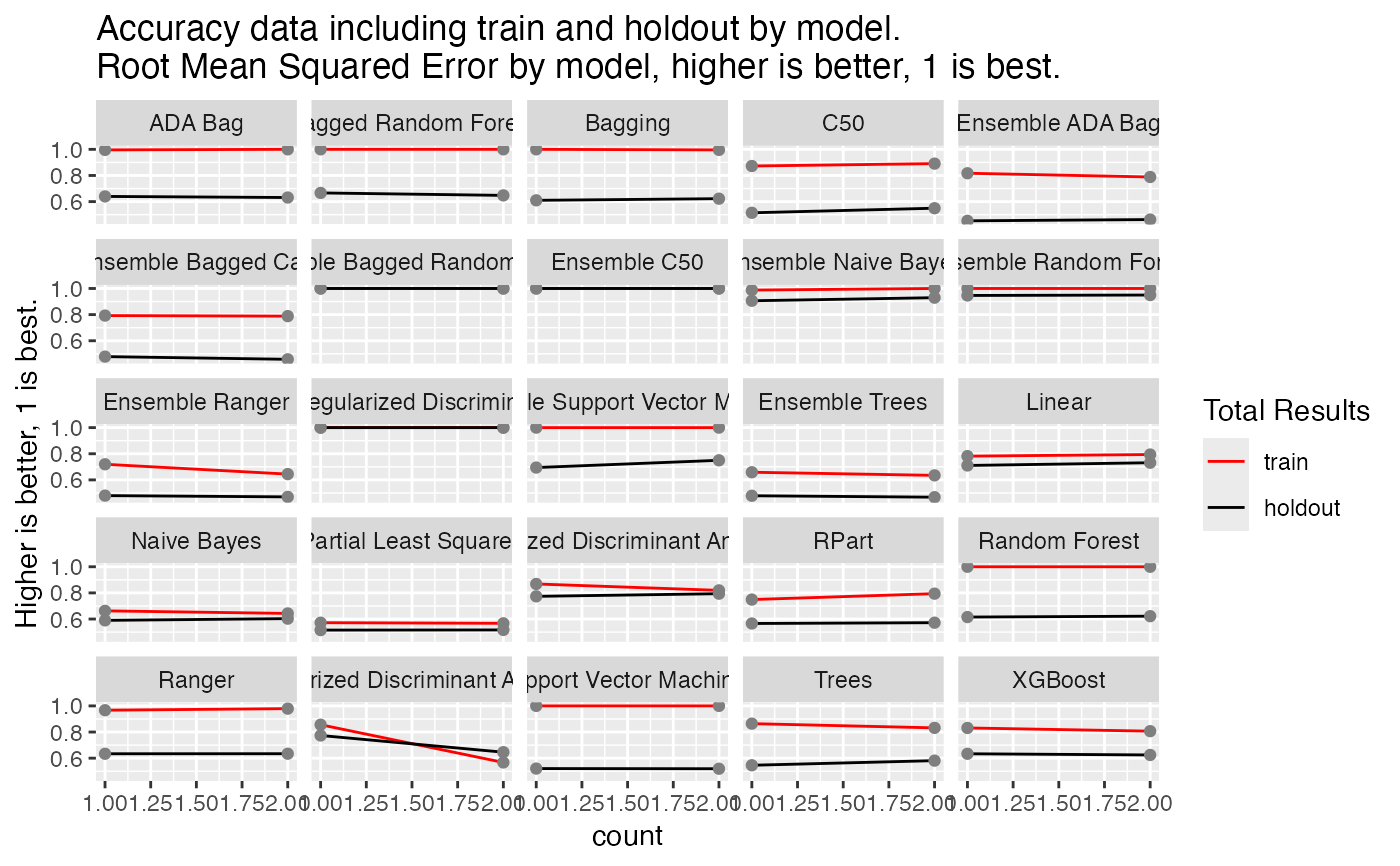

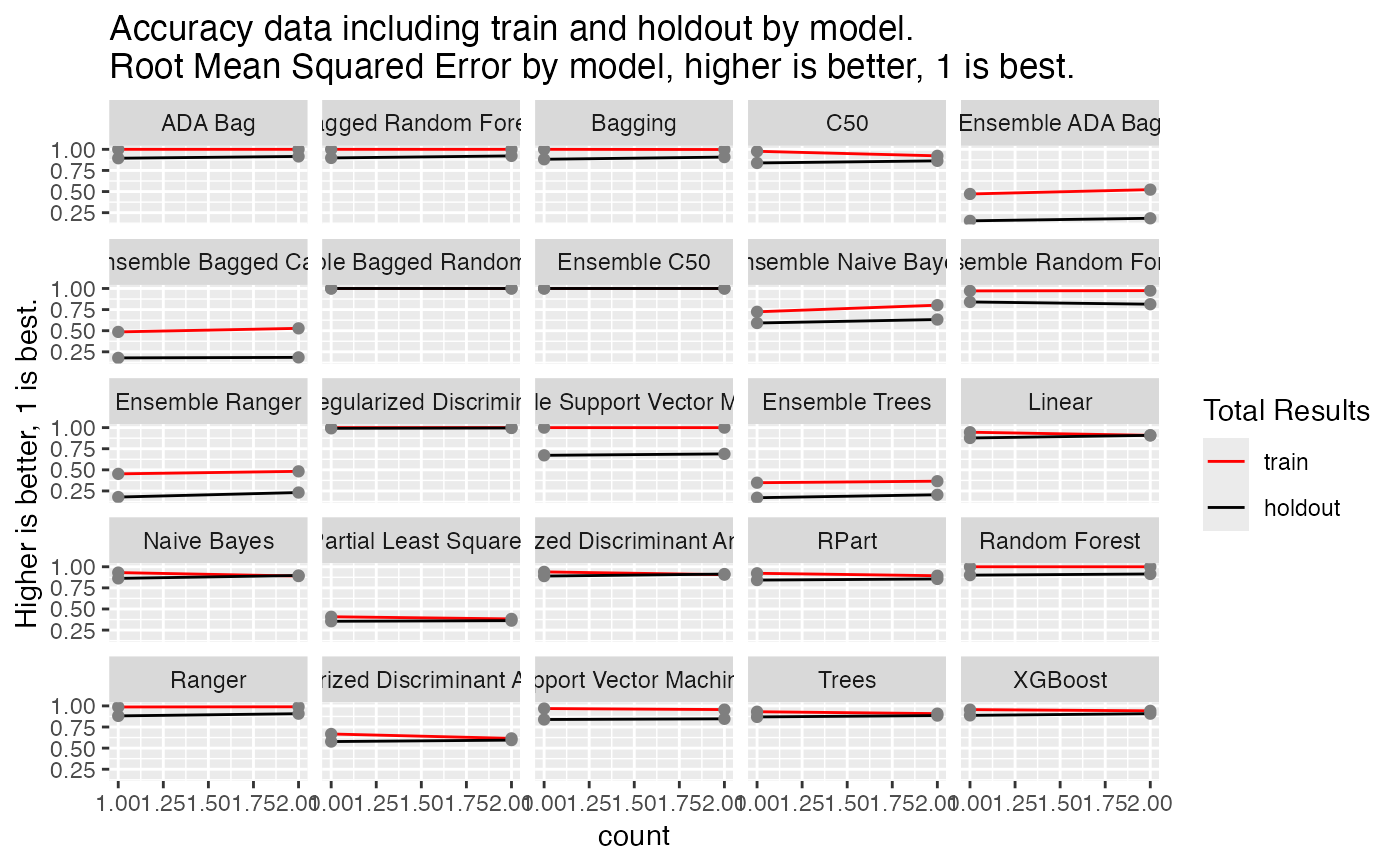

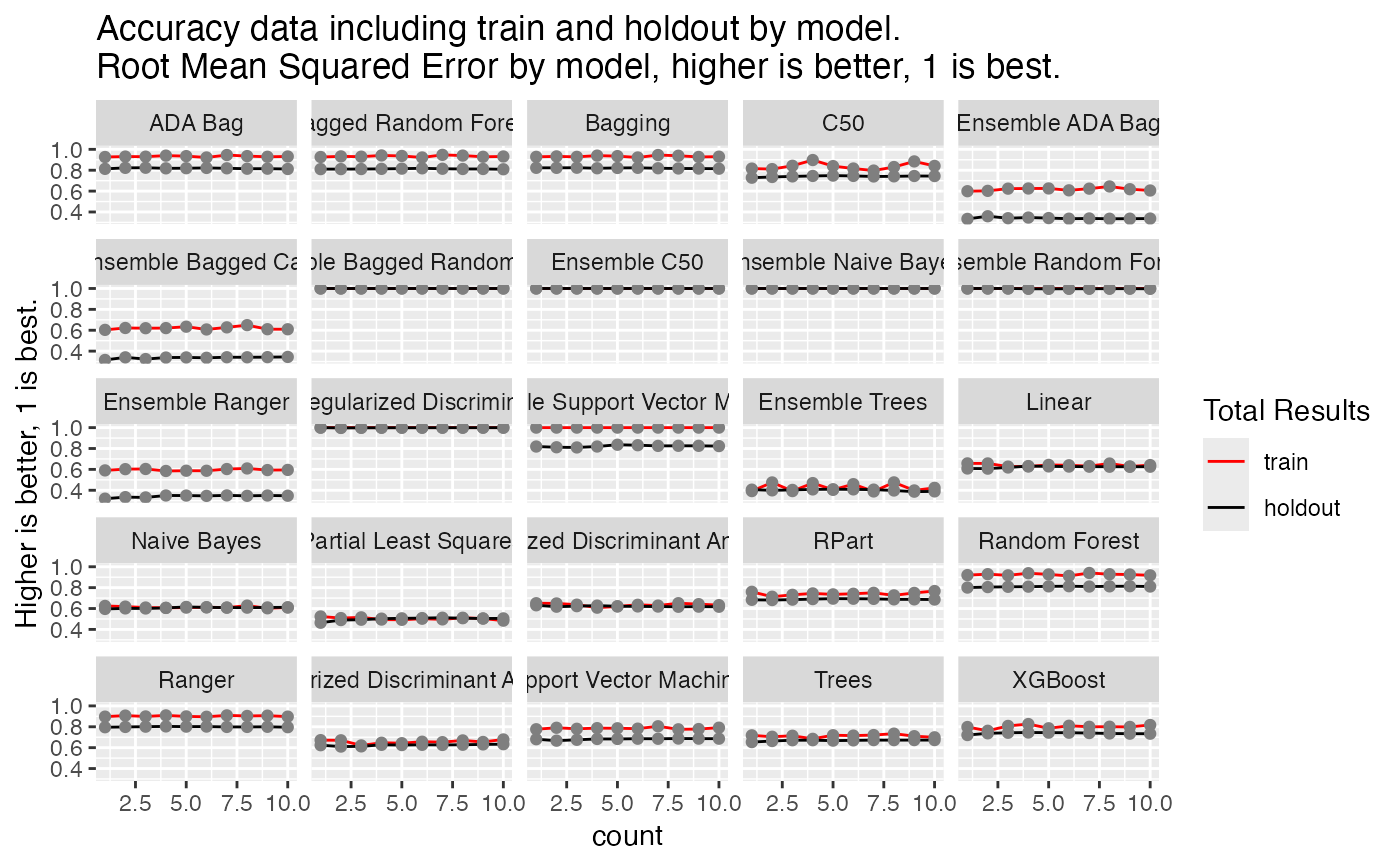

#> $Total_plot

#>

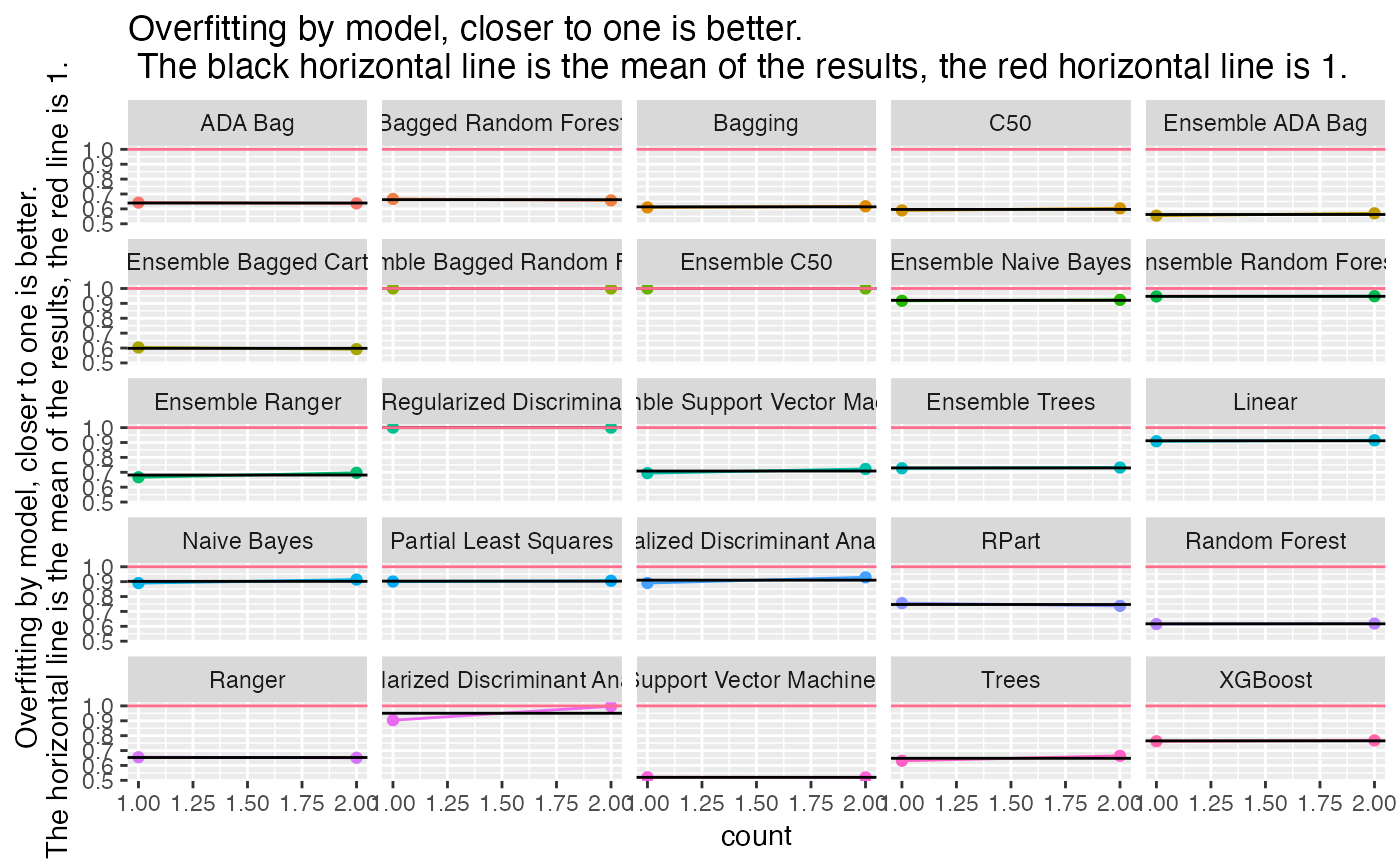

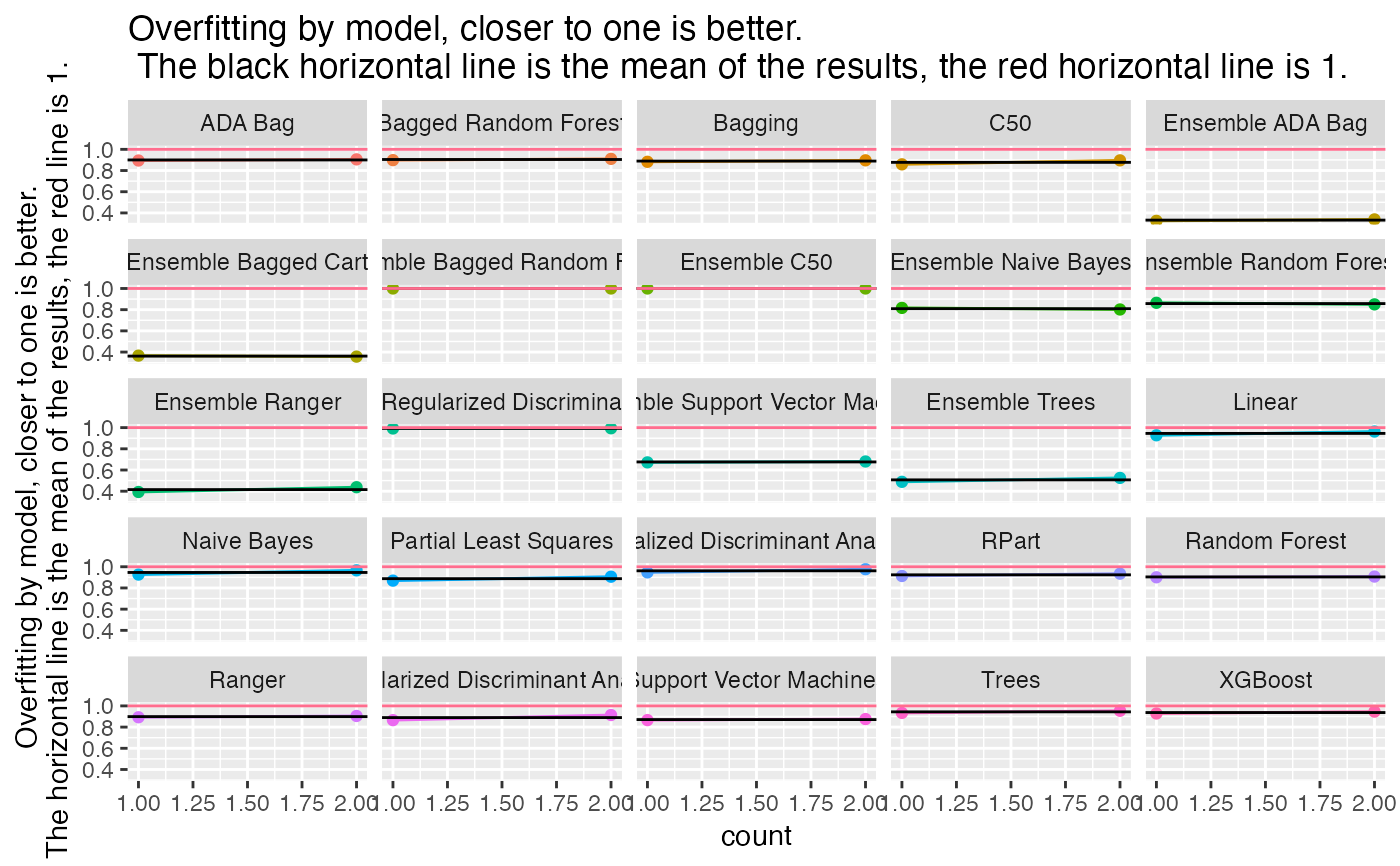

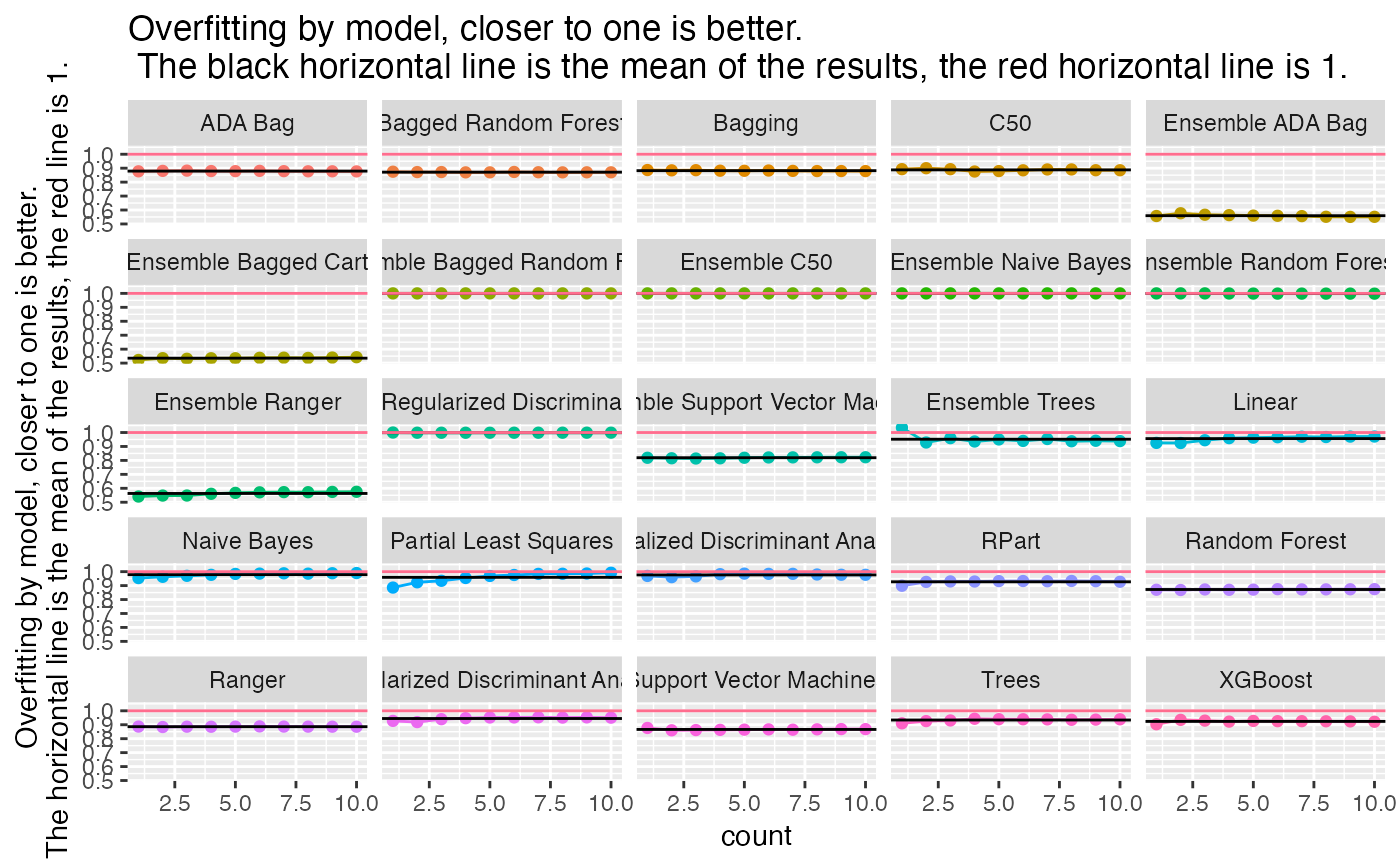

#> $Overfitting_plot

#>
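The confusion matrices printed under $Summary_tables above can be summarized numerically. As a minimal sketch, assuming rows are predicted classes and columns are the observed y_test classes (which is how the row and column labels in the output read), overall accuracy is the diagonal sum divided by the total count, and per-class recall is each diagonal entry divided by its column sum. The function names `accuracy` and `recall` are illustrative and are not part of the package.

```r
# Confusion matrices copied from the $Summary_tables output above.
# Assumption: rows are predicted classes, columns are observed classes.
classes <- c("Bad", "Good", "Medium")

adabag <- matrix(c(37,  0,  31,
                    0, 38,  10,
                   43, 37, 123),
                 nrow = 3, byrow = TRUE,
                 dimnames = list(predicted = classes, observed = classes))

pls <- matrix(c( 0,  0,   0,
                 0,  0,   0,
                80, 75, 164),
              nrow = 3, byrow = TRUE,
              dimnames = list(predicted = classes, observed = classes))

# Share of all cases on the diagonal (correct predictions).
accuracy <- function(cm) sum(diag(cm)) / sum(cm)

# For each observed class, the share of its cases that were predicted correctly.
recall <- function(cm) diag(cm) / colSums(cm)

accuracy(adabag)   # 198 / 319
recall(adabag)     # 37/80, 38/75, 123/164
accuracy(pls)      # 164 / 319
recall(pls)        # 0, 0, 1
```

The Partial Least Squares table predicts Medium for every case, so its accuracy equals the share of Medium cases in the table and its recall for Bad and Good is zero. Reading recall next to accuracy makes that kind of degenerate model easy to spot.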

Classification(data = dry_beans_small,
               colnum = 17,
               numresamples = 2,
               do_you_have_new_data = "N",
               how_to_handle_strings = 0,
               save_all_trained_models = "N",
               use_parallel = "N",
               train_amount = 0.60,
               test_amount = 0.20,
               validation_amount = 0.20)

#> [1]

#> [1] "Resampling number 1 of 2,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 2 of 2,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> $Final_results

#>

#> $Barchart

#>

#> $Accuracy_Barchart

#>

#> $Overfitting_barchart

#>

#> $Duration_barchart

#>

#> $Data_summary

#> Area Perimeter MajorAxisLength MinorAxisLength

#> Min. : 20548 Min. : 524.7 Min. :184.0 Min. :137.0

#> 1st Qu.: 36681 1st Qu.: 708.1 1st Qu.:254.0 1st Qu.:175.4

#> Median : 44716 Median : 793.3 Median :299.2 Median :192.8

#> Mean : 53289 Mean : 856.0 Mean :320.6 Mean :202.7

#> 3rd Qu.: 61247 3rd Qu.: 969.4 3rd Qu.:376.5 3rd Qu.:215.8

#> Max. :226806 Max. :1845.9 Max. :714.0 Max. :428.7

#>

#> AspectRation Eccentricity ConvexArea EquivDiameter

#> Min. :1.025 Min. :0.2190 Min. : 20825 Min. :161.7

#> 1st Qu.:1.436 1st Qu.:0.7175 1st Qu.: 37052 1st Qu.:216.1

#> Median :1.550 Median :0.7642 Median : 45261 Median :238.6

#> Mean :1.583 Mean :0.7517 Mean : 53997 Mean :253.6

#> 3rd Qu.:1.712 3rd Qu.:0.8117 3rd Qu.: 62159 3rd Qu.:279.3

#> Max. :2.389 Max. :0.9082 Max. :229994 Max. :537.4

#>

#> Extent Solidity roundness Compactness

#> Min. :0.5802 Min. :0.9551 Min. :0.5718 Min. :0.6454

#> 1st Qu.:0.7240 1st Qu.:0.9859 1st Qu.:0.8320 1st Qu.:0.7619

#> Median :0.7606 Median :0.9886 Median :0.8833 Median :0.8013

#> Mean :0.7519 Mean :0.9874 Mean :0.8750 Mean :0.7997

#> 3rd Qu.:0.7887 3rd Qu.:0.9903 3rd Qu.:0.9191 3rd Qu.:0.8331

#> Max. :0.8325 Max. :0.9937 Max. :0.9879 Max. :0.9873

#>

#> ShapeFactor1 ShapeFactor2 ShapeFactor3 ShapeFactor4

#> Min. :0.002998 Min. :0.0006017 Min. :0.4165 Min. :0.9550

#> 1st Qu.:0.005941 1st Qu.:0.0011528 1st Qu.:0.5805 1st Qu.:0.9941

#> Median :0.006645 Median :0.0016810 Median :0.6421 Median :0.9966

#> Mean :0.006553 Mean :0.0017100 Mean :0.6432 Mean :0.9952

#> 3rd Qu.:0.007283 3rd Qu.:0.0021681 3rd Qu.:0.6941 3rd Qu.:0.9980

#> Max. :0.009328 Max. :0.0035064 Max. :0.9748 Max. :0.9996

#>

#> y

#> BARBUNYA: 79

#> BOMBAY : 31

#> CALI : 97

#> DERMASON:212

#> HOROZ :115

#> SEKER :121

#> SIRA :158

#>
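The class counts in the y column of the summary above show that the seven bean classes are not equally common. As a rough aid for reading the accuracy figures, the sketch below computes each class's share and the accuracy a model would reach by always predicting the most common class. This baseline is an interpretive aid added here under that stated assumption; it is not something the package output reports.

```r
# Class counts copied from the y column of $Data_summary above.
class_counts <- c(BARBUNYA = 79, BOMBAY = 31, CALI = 97, DERMASON = 212,
                  HOROZ = 115, SEKER = 121, SIRA = 158)

# Share of each class in the data.
class_share <- class_counts / sum(class_counts)

# Most common class and the accuracy of always predicting it.
majority_class <- names(which.max(class_counts))
baseline_accuracy <- max(class_share)

round(class_share, 3)
majority_class
baseline_accuracy   # 212 / 813
```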

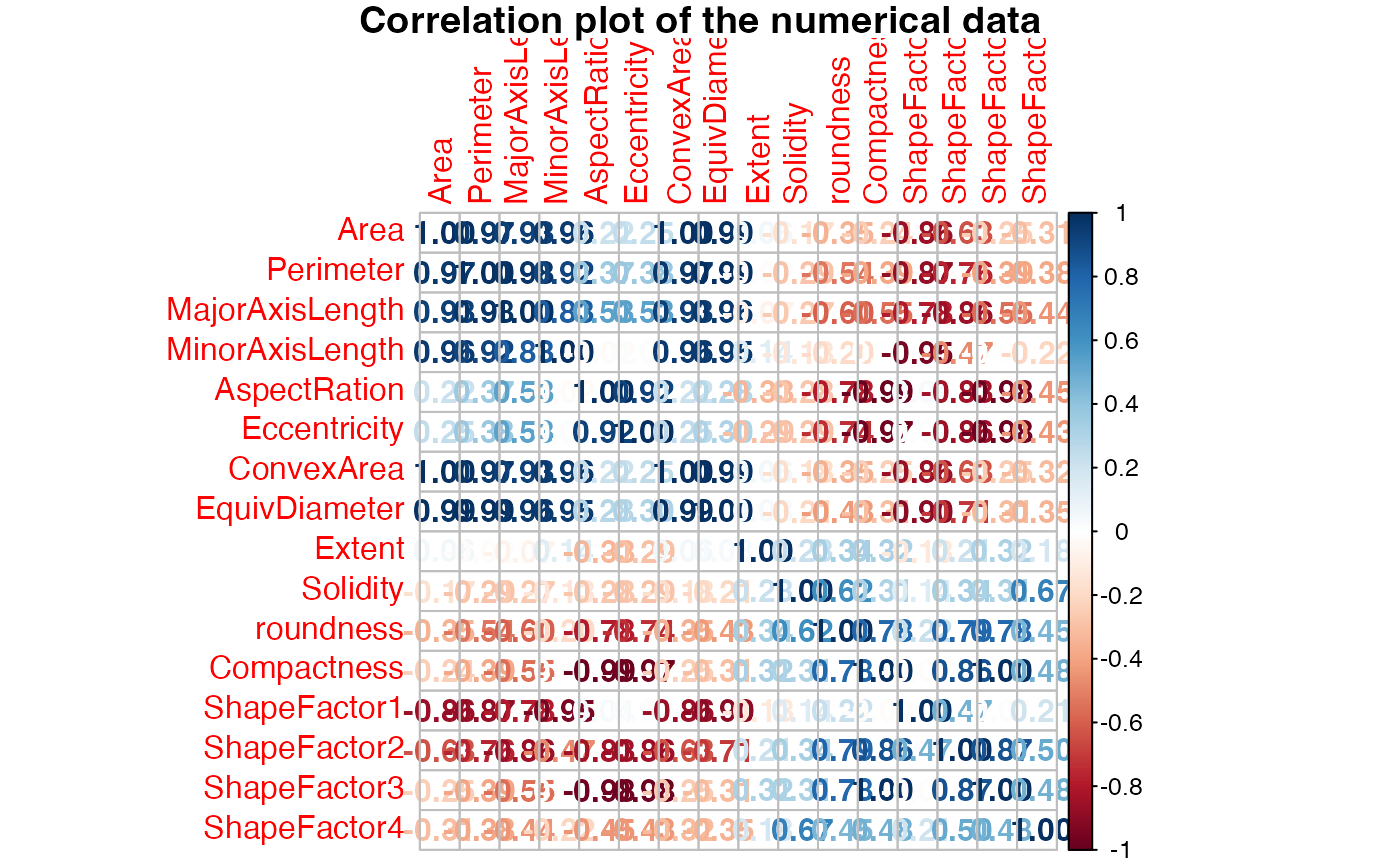

#> $Correlation_matrix

#>

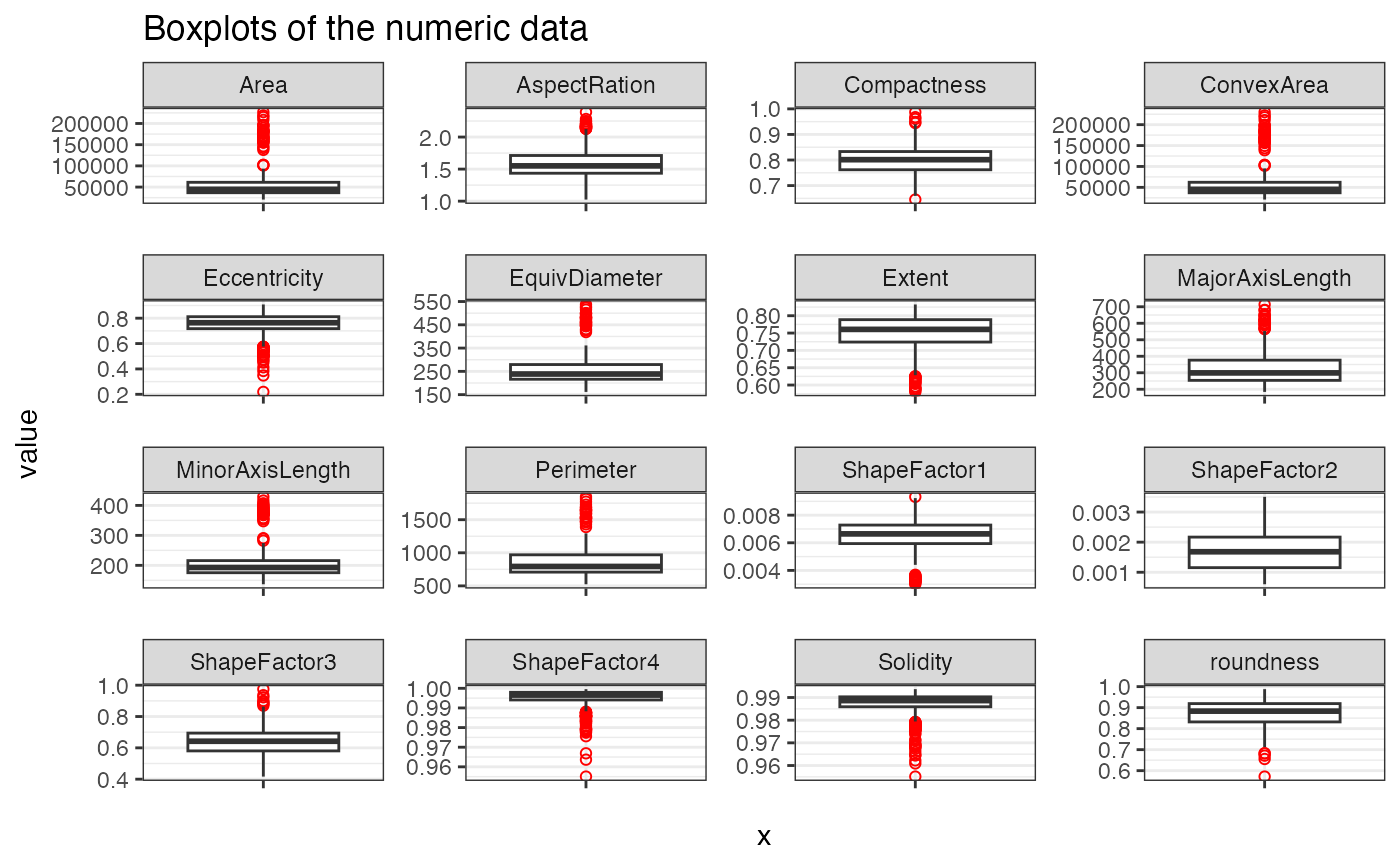

#> $Boxplots

#>

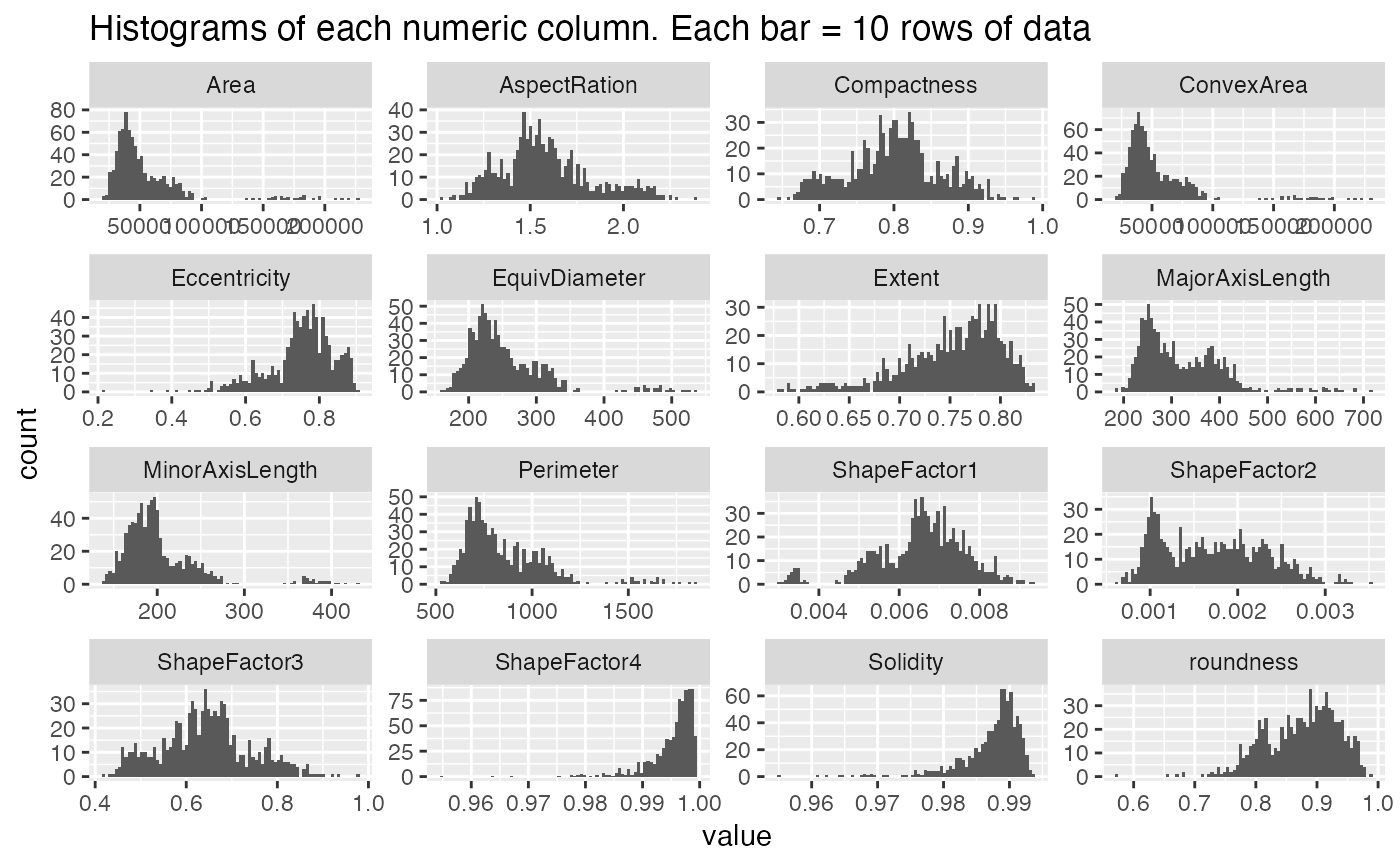

#> $Histograms

#>

#> $Head_of_data

#>

#> $Head_of_ensemble

#>

#> $Summary_tables

#> $Summary_tables$ADABag

#> y_test

#> adabag_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 59 0 5 0 0 0 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 4 0 70 0 1 0 1

#> DERMASON 0 0 0 149 0 2 18

#> HOROZ 1 0 3 0 90 0 0

#> SEKER 0 0 0 3 0 97 1

#> SIRA 1 0 2 14 1 0 115

#>

#> $Summary_tables$Bagging

#> y_test

#> bagging_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 61 0 3 0 0 0 1

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 3 0 72 0 0 0 1

#> DERMASON 0 0 0 149 0 2 27

#> HOROZ 0 0 3 0 91 0 0

#> SEKER 0 0 0 3 0 97 1

#> SIRA 1 0 2 14 1 0 105

#>

#> $Summary_tables$`Bagged Random Forest`

#> y_test

#> bag_rf_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 60 0 4 0 0 0 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 4 0 71 0 0 0 1

#> DERMASON 0 0 0 151 0 2 19

#> HOROZ 0 0 3 0 91 0 0

#> SEKER 0 0 0 3 0 97 1

#> SIRA 1 0 2 12 1 0 114

#>

#> $Summary_tables$C50

#> y_test

#> C50_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 51 0 7 0 1 1 2

#> BOMBAY 1 30 0 0 0 0 0

#> CALI 8 0 68 0 1 0 1

#> DERMASON 0 0 0 148 0 3 29

#> HOROZ 1 0 3 0 89 0 5

#> SEKER 1 0 0 8 0 95 2

#> SIRA 3 0 2 10 1 0 96

#>

#> $Summary_tables$Linear

#> y_test

#> linear_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 55 0 0 0 1 1 2

#> BOMBAY 0 28 0 0 0 0 0

#> CALI 3 2 71 0 2 0 0

#> DERMASON 0 0 0 149 1 1 12

#> HOROZ 0 0 3 0 88 0 1

#> SEKER 1 0 0 1 0 95 1

#> SIRA 6 0 6 16 0 2 119

#>

#> $Summary_tables$`Naive Bayes`

#> y_test

#> n_bayes_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 57 0 9 0 0 1 2

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 5 0 66 0 0 0 0

#> DERMASON 0 0 0 142 0 1 10

#> HOROZ 2 0 3 1 91 0 2

#> SEKER 0 0 0 3 0 95 4

#> SIRA 1 0 2 20 1 2 117

#>

#> $Summary_tables$`Partial Least Sqaures`

#> y_test

#> pls_test_predict BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 0 0 0 0 0 0 0

#> BOMBAY 0 19 5 0 0 0 0

#> CALI 42 11 56 0 3 0 0

#> DERMASON 23 0 19 166 89 99 135

#> HOROZ 0 0 0 0 0 0 0

#> SEKER 0 0 0 0 0 0 0

#> SIRA 0 0 0 0 0 0 0

#>

#> $Summary_tables$`Penalized Discriminant Ananysis`

#> y_test

#> pda_test_predict BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 56 0 0 0 1 1 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 1 0 73 0 0 0 0

#> DERMASON 0 0 0 141 0 1 10

#> HOROZ 0 0 2 0 90 0 0

#> SEKER 1 0 0 1 0 94 1

#> SIRA 7 0 5 24 1 3 124

#>

#> $Summary_tables$`Random Forest`

#> y_test

#> rf_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 58 0 6 0 0 0 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 4 0 69 0 0 0 0

#> DERMASON 0 0 0 153 1 2 21

#> HOROZ 0 0 3 0 91 0 1

#> SEKER 0 0 0 3 0 97 1

#> SIRA 3 0 2 10 0 0 112

#>

#> $Summary_tables$Ranger

#> y_test

#> ranger_test_predict BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 58 0 7 0 0 1 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 4 0 68 0 0 0 0

#> DERMASON 0 0 0 147 1 2 19

#> HOROZ 0 0 3 0 91 0 1

#> SEKER 0 0 0 3 0 96 0

#> SIRA 3 0 2 16 0 0 115

#>

#> $Summary_tables$`Regularized Discriminant Analysis`

#> y_test

#> BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 24 0 30 0 9 0 0

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 28 0 41 0 0 0 0

#> DERMASON 0 0 0 130 0 31 8

#> HOROZ 10 0 9 0 56 2 26

#> SEKER 0 0 0 35 6 44 32

#> SIRA 3 0 0 1 21 22 69

#>

#> $Summary_tables$RPart

#> y_test

#> rpart_test_predict BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 53 2 12 0 1 0 0

#> BOMBAY 0 17 0 0 0 0 0

#> CALI 8 11 63 0 1 0 2

#> DERMASON 0 0 0 139 0 1 16

#> HOROZ 0 0 3 0 87 0 0

#> SEKER 0 0 0 2 0 95 1

#> SIRA 4 0 2 25 3 3 116

#>

#> $Summary_tables$`Support Vector Machines`

#> y_test

#> svm_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 50 0 3 0 0 0 0

#> BOMBAY 0 8 0 0 0 0 0

#> CALI 2 0 61 0 0 0 0

#> DERMASON 0 0 0 151 0 2 18

#> HOROZ 10 22 14 3 92 3 8

#> SEKER 0 0 0 0 0 94 1

#> SIRA 3 0 2 12 0 0 108

#>

#> $Summary_tables$Trees

#> y_test

#> tree_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 52 0 6 0 0 0 3

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 9 0 69 0 2 1 0

#> DERMASON 0 0 0 163 1 5 34

#> HOROZ 0 0 3 0 88 0 2

#> SEKER 0 0 0 1 0 92 1

#> SIRA 4 0 2 2 1 1 95

#>

#> $Summary_tables$XGBoost

#>

#> BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 54 0 5 0 0 0 1

#> BOMBAY 0 30 0 0 0 0 0

#> CALI 2 0 68 0 0 0 0

#> DERMASON 0 0 0 150 1 1 18

#> HOROZ 0 0 4 0 91 0 0

#> SEKER 3 0 0 2 0 96 0

#> SIRA 6 0 3 14 0 2 116

#>

#> $Summary_tables$`Ensemble ADABag`

#> ensemble_y_test

#> ensemble_adabag_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 3 1 4 4 2 3 4

#> BOMBAY 0 0 0 5 1 2 0

#> CALI 6 2 5 10 11 6 9

#> DERMASON 12 10 14 28 16 19 20

#> HOROZ 3 0 2 6 4 7 3

#> SEKER 1 2 2 5 3 1 3

#> SIRA 1 1 1 7 3 1 7

#>

#> $Summary_tables$`Ensemble Bagged Cart`

#>

#> ensemble_bag_cart_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 1 1 1 0 0 1 0

#> BOMBAY 0 0 0 4 1 2 2

#> CALI 4 3 3 8 7 4 5

#> DERMASON 16 10 18 33 20 22 27

#> HOROZ 3 1 1 4 5 8 5

#> SEKER 0 1 4 7 4 1 2

#> SIRA 2 0 1 9 3 1 5

#>

#> $Summary_tables$`Ensemble Bagged Random Forest`

#>

#> ensemble_bag_rf_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 26 0 0 0 0 0 0

#> BOMBAY 0 16 0 0 0 0 0

#> CALI 0 0 28 0 0 0 0

#> DERMASON 0 0 0 65 0 0 0

#> HOROZ 0 0 0 0 40 0 0

#> SEKER 0 0 0 0 0 39 0

#> SIRA 0 0 0 0 0 0 46

#>

#> $Summary_tables$`Ensemble C50`

#> ensemble_y_test

#> ensemble_C50_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 26 0 0 0 0 0 0

#> BOMBAY 0 16 0 0 0 0 0

#> CALI 0 0 28 0 0 0 0

#> DERMASON 0 0 0 65 0 0 0

#> HOROZ 0 0 0 0 40 0 0

#> SEKER 0 0 0 0 0 39 0

#> SIRA 0 0 0 0 0 0 46

#>

#> $Summary_tables$`Ensemble Naive Bayes`

#> ensemble_y_test

#> ensemble_n_bayes_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 14 3 6 7 5 0 7

#> BOMBAY 3 9 0 3 3 6 8

#> CALI 1 0 14 2 0 1 0

#> DERMASON 1 3 4 48 3 2 1

#> HOROZ 6 1 1 5 26 4 3

#> SEKER 0 0 0 0 2 26 0

#> SIRA 1 0 3 0 1 0 27

#>

#> $Summary_tables$`Ensemble Ranger`

#> ensemble_y_test

#> ensemble_ranger_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 1 0 1 0 0 0 1

#> BOMBAY 0 0 0 0 0 0 0

#> CALI 4 2 3 6 5 5 7

#> DERMASON 9 12 15 32 15 18 15

#> HOROZ 1 1 0 4 4 6 4

#> SEKER 4 0 6 8 4 6 6

#> SIRA 7 1 3 15 12 4 13

#>

#> $Summary_tables$`Ensemble Random Forest`

#> ensemble_y_test

#> ensemble_rf_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 17 1 0 0 1 0 0

#> BOMBAY 0 1 0 0 0 0 0

#> CALI 1 0 24 0 2 0 3

#> DERMASON 0 0 0 65 0 0 0

#> HOROZ 1 2 2 0 30 2 0

#> SEKER 1 3 1 0 4 30 0

#> SIRA 6 9 1 0 3 7 43

#>

#> $Summary_tables$`Ensemble Regularized Discriminant Analysis`

#> ensemble_y_test

#> BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 25 0 0 0 0 0 0

#> BOMBAY 0 16 0 0 0 0 0

#> CALI 0 0 28 0 0 0 0

#> DERMASON 0 0 0 65 0 0 0

#> HOROZ 1 0 0 0 40 0 0

#> SEKER 0 0 0 0 0 39 0

#> SIRA 0 0 0 0 0 0 46

#>

#> $Summary_tables$`Ensemble Support Vector Machines`

#> ensemble_y_test

#> ensemble_svm_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 12 0 0 0 0 0 0

#> BOMBAY 0 3 0 0 0 0 0

#> CALI 0 0 20 0 0 0 0

#> DERMASON 9 10 5 60 13 8 9

#> HOROZ 0 0 0 0 24 0 0

#> SEKER 0 0 0 0 0 22 0

#> SIRA 5 3 3 5 3 9 37

#>

#> $Summary_tables$`Ensemble Trees`

#> ensemble_y_test

#> ensemble_tree_test_pred BARBUNYA BOMBAY CALI DERMASON HOROZ SEKER SIRA

#> BARBUNYA 0 0 0 0 0 0 0

#> BOMBAY 0 0 0 0 0 0 0

#> CALI 3 1 2 7 3 4 4

#> DERMASON 8 10 14 26 14 14 15

#> HOROZ 1 3 0 5 3 8 4

#> SEKER 6 1 7 9 8 6 8

#> SIRA 8 1 5 18 12 7 15

#>

#>

#> $Accuracy_plot

#>

#> $Head_of_data

#>

#> $Head_of_ensemble

#>

#> $Total_plot

#>

#> $Overfitting_plot

#>
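The summary tables printed above are confusion matrices, with predicted classes in rows and `y_test` (actual classes) in columns. As a minimal sketch of how such a table can be read, the snippet below re-enters the Ranger counts by hand and derives overall accuracy plus per-class recall and precision. The object names (`classes`, `ranger_counts`) are illustrative and are not objects returned by `Classification()`.

```r
# Counts copied by hand from the Ranger table above.
# Rows are predictions (ranger_test_predict), columns are actual classes (y_test).
classes <- c("BARBUNYA", "BOMBAY", "CALI", "DERMASON", "HOROZ", "SEKER", "SIRA")

ranger_counts <- matrix(
  c(58,  0,  7,   0,  0,  1,   0,
     0, 30,  0,   0,  0,  0,   0,
     4,  0, 68,   0,  0,  0,   0,
     0,  0,  0, 147,  1,  2,  19,
     0,  0,  3,   0, 91,  0,   1,
     0,  0,  0,   3,  0, 96,   0,
     3,  0,  2,  16,  0,  0, 115),
  nrow = 7, byrow = TRUE,
  dimnames = list(predicted = classes, actual = classes)
)

# Overall accuracy: correctly classified cases (the diagonal) over all cases.
accuracy <- sum(diag(ranger_counts)) / sum(ranger_counts)

# Per-class recall: correct predictions divided by the actual count of each class.
recall <- diag(ranger_counts) / colSums(ranger_counts)

# Per-class precision: correct predictions divided by the predicted count of each class.
precision <- diag(ranger_counts) / rowSums(ranger_counts)

round(accuracy, 3)  # 605 / 667, about 0.907
round(recall, 3)
round(precision, 3)
```

The same three lines work on any of the other tables, since each is a square matrix with identical row and column classes.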

Classification(data = Maternal_Health_Risk,

colnum = 7,

numresamples = 10,

do_you_have_new_data = "N",

how_to_handle_strings = 1,

save_all_trained_models = "N",

use_parallel = "N",

train_amount = 0.60,

test_amount = 0.20,

validation_amount = 0.20)
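
The `train_amount`, `test_amount`, and `validation_amount` arguments in the call above sum to 1. As a rough illustration of what a 0.60 / 0.20 / 0.20 partition means, the sketch below assigns each row at random to one of three groups with those probabilities. This is an assumption about the general technique, not the package's internal code; `split_three_way` is a hypothetical helper, and the built-in `iris` data stands in for the real data set.

```r
# Hypothetical helper (not part of the package): random three-way row split.
split_three_way <- function(data,
                            train_amount = 0.60,
                            test_amount = 0.20,
                            validation_amount = 0.20) {
  # The three proportions are expected to sum to 1.
  stopifnot(isTRUE(all.equal(train_amount + test_amount + validation_amount, 1)))

  # Draw a group label for every row; realized group sizes are approximate.
  groups <- sample(
    c("train", "test", "validation"),
    size = nrow(data),
    replace = TRUE,
    prob = c(train_amount, test_amount, validation_amount)
  )

  # Return a named list of three data frames.
  split(data, factor(groups, levels = c("train", "test", "validation")))
}

set.seed(42)
parts <- split_three_way(iris)
sapply(parts, nrow)  # row counts per group; together they cover every row
```

Because rows are drawn independently, the group sizes vary around the requested proportions from one resample to the next.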

#> [1]

#> [1] "Resampling number 1 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 2 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 3 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 4 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 5 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 6 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 7 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 8 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 9 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 10 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> $Final_results

#>

#> $Barchart

#> [1]

#> [1] "Resampling number 1 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 2 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 3 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 4 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 5 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 6 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 7 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 8 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 9 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]

#> [1] "Working on Ensembles with Ensemble ADA Bag analysis"

#> [1] "Working on Ensemble Bagged CART analysis"

#> [1] "Working on Ensemble Bagged Random Forest analysis"

#> [1] "Working on Ensemble C50 analysis"

#> [1] "Working on Ensembles using Naive Bayes analysis"

#> [1] "Working on Ensembles using Ranger analysis"

#> [1] "Working on Ensembles using Random Forest analysis"

#> [1] "Working on Ensembles using Regularized Discriminant analysis"

#> [1] "Working on Ensembles using Support Vector Machines analysis"

#> [1] "Working on Ensembles using Trees analysis"

#> [1]

#> [1] "Resampling number 10 of 10,"

#> [1]

#> [1] "Working on ADA Bag analysis"

#> [1] "Working on Bagging analysis"

#> [1] "Working on Bagged Random Forest analysis"

#> [1] "Working on C50 analysis"

#> [1] "Working on Linear analysis"

#> [1] "Working on Naive Bayes analysis"

#> [1] "Working on Partial Least Squares analysis"

#> [1] "Working on Penalized Discriminant analysis"

#> [1] "Working on Random Forest analysis"

#> [1] "Working on Ranger analysis"

#> [1] "Working on Regularized Discriminant analysis"

#> [1] "Working on RPart analysis"

#> [1] "Working on Support Vector Machine analysis"

#> [1] "Working on Trees analysis"

#> [1] "Working on XGBoost analysis"

#> [1]

#> [1] "Working on the Ensembles section"

#> [1]